Web crawling frameworks make web scraping easier and more accessible. Each of these frameworks lets you fetch data from the web much as a web browser does, and can save you time and effort on the crawling task at hand. We will walk through the top open source web crawling frameworks and tools for web scraping projects, along with the best commercial SEO web crawlers that can help optimize your website and grow your business.

Open Source Web Crawling Tools & Frameworks

- Apache Nutch

- Heritrix

- StormCrawler

- Apify SDK

- NodeCrawler

- Scrapy

- Node SimpleCrawler

- HTTrack

ScrapeHero Web Crawling Service

ScrapeHero is a leader in web crawling services and can crawl publicly available data at high speeds. We are equipped with a platform to provide you the best web scraping service. You do not need to worry about setting up servers or downloading any software. Tell us your requirements and we will manage the data crawling for you.

Apache Nutch

Apache Nutch is a well-established web crawler that is part of the Apache Hadoop ecosystem. It relies on Hadoop data structures and makes use of Hadoop's distributed framework. It operates in batches, with the various aspects of web crawling done as separate steps, such as generating a list of URLs to fetch, parsing web pages, and updating its data structures.
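
The batch cycle described above can be sketched roughly as follows. This is a minimal in-memory illustration in Python, not Nutch's Java API: `FAKE_WEB`, `generate`, `fetch`, `parse`, `update`, and `crawl` are hypothetical names, the explicit fetch step is our assumption, and a real Nutch crawl keeps these structures on Hadoop rather than in a dictionary.

```python
import re

# Hypothetical stand-in for the web: URL -> HTML body.
FAKE_WEB = {
    "http://example.com/": '<a href="http://example.com/a">A</a> <a href="http://example.com/b">B</a>',
    "http://example.com/a": '<a href="http://example.com/b">B</a>',
    "http://example.com/b": "no links here",
}

def generate(crawldb, batch_size):
    """Step 1: pick a batch of URLs that have not been fetched yet."""
    return sorted(u for u, status in crawldb.items() if status == "unfetched")[:batch_size]

def fetch(urls):
    """Step 2: download every URL in the batch (here, a dictionary lookup)."""
    return {u: FAKE_WEB.get(u, "") for u in urls}

def parse(pages):
    """Step 3: extract the outgoing links from each fetched page."""
    return {u: re.findall(r'href="([^"]+)"', html) for u, html in pages.items()}

def update(crawldb, links_by_page):
    """Step 4: mark the batch as fetched and record newly discovered URLs."""
    for url, links in links_by_page.items():
        crawldb[url] = "fetched"
        for link in links:
            crawldb.setdefault(link, "unfetched")

def crawl(seed, rounds, batch_size=10):
    """Run the generate/fetch/parse/update cycle for a fixed number of rounds."""
    crawldb = {seed: "unfetched"}
    for _ in range(rounds):
        batch = generate(crawldb, batch_size)
        if not batch:
            break
        update(crawldb, parse(fetch(batch)))
    return crawldb
```

Each round finishes completely before the next one starts, which is what makes the process batch-oriented: URLs discovered in one round are only fetched in a later round.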

Apache Nutch provides extensible interfaces such as Parse and integrates with Apache Tika, Apache Solr, and Elasticsearch. Custom functionality is added through its flexible plugin system, which most use cases need, so you may spend time writing your own plugins.

Programming Language – Java

Pros

- Highly extensible and Flexible system for web crawling

- Implements search when combined with open source search platforms like Apache Lucene or Apache Solr

- Dynamically scalable with Hadoop

Cons

- Difficult to set up

- Poor documentation

- Some operations take longer as the size of the crawl grows

Heritrix

Heritrix is a web crawler designed for web archiving, written by the Internet Archive. It is available under a free software license and written in Java. The main interface is accessible using a web browser, and there is a command-line tool that can optionally be used to initiate crawls. Heritrix runs in a distributed environment. It is scalable, but not dynamically scalable. This means you must decide on the number of machines before you start web crawling.

Programming Language – Java

Pros

- Excellent user documentation and easy setup

- Extensible, good performance and decent support for distributed crawls

- Respects robots.txt

Cons

- Not dynamically scalable
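
To show what respecting robots.txt (listed among the pros above) means in practice, here is a minimal sketch using Python's standard `urllib.robotparser` module. It is not Heritrix code; the rules in `ROBOTS_TXT`, the `allowed` helper, and the `example-crawler` user agent are hypothetical.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; a real crawler would download it from the site.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
""".splitlines()

def allowed(url, user_agent="example-crawler"):
    """Return True if the rules above permit this user agent to fetch the URL."""
    parser = RobotFileParser()
    parser.parse(ROBOTS_TXT)
    return parser.can_fetch(user_agent, url)
```

A polite crawler runs a check like this before every request and skips any URL the site has disallowed.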

StormCrawler

StormCrawler is a library and collection of resources that developers can use to build their own crawlers. The framework is based on the stream processing framework Apache Storm, so all operations happen at the same time: URLs are fetched, parsed, and indexed continuously. This makes the whole crawling process more efficient and well suited to large-scale scraping.

It comes with modules for commonly used projects such as Apache Solr, Elasticsearch, MySQL, and Apache Tika, and offers extensible functionality for data extraction with XPath, sitemaps, URL filtering, and language identification.
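
The continuous fetch, parse, and index flow can be illustrated with a small Python generator pipeline. This is only a single-process sketch of the streaming idea, not StormCrawler's API: Apache Storm runs the stages in parallel across workers, and `PAGES`, `fetch`, `parse`, `index`, and `run` are hypothetical names.

```python
# Hypothetical page store standing in for real HTTP fetches.
PAGES = {
    "http://example.com/1": "<title>One</title>",
    "http://example.com/2": "<title>Two</title>",
}

def fetch(urls, log):
    """Yield each page as soon as it is fetched."""
    for url in urls:
        log.append(f"fetch {url}")
        yield url, PAGES.get(url, "")

def parse(pages, log):
    """Extract the title from each page as it arrives."""
    for url, html in pages:
        log.append(f"parse {url}")
        start, end = html.find("<title>"), html.find("</title>")
        title = html[start + 7:end] if start != -1 and end != -1 else ""
        yield url, title

def index(docs, log):
    """Store each parsed document as it arrives."""
    store = {}
    for url, title in docs:
        log.append(f"index {url}")
        store[url] = title
    return store

def run(urls):
    """Chain the stages so each URL flows through all of them before the next starts."""
    log = []
    store = index(parse(fetch(urls, log), log), log)
    return store, log
```

The log shows the first URL being fetched, parsed, and indexed before the second is fetched, in contrast to a batch crawler that finishes fetching everything before it starts parsing.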

Programming Language – Java

Pros

- Appropriate for large-scale recursive crawls

- Suitable for low-latency web crawling

Cons

- Does not support document deduplication

Scrapy

Scrapy is an open source web scraping framework in Python used to build web scrapers. It gives you all the tools you need to efficiently extract data from websites, process it as you want, and store it in your preferred structure and format. Scrapy has a couple of handy built-in export formats such as JSON, XML, and CSV. It runs on Linux, macOS, and Windows systems.
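
As a rough illustration of what storing scraped items in formats like JSON and CSV involves, here is a minimal sketch using only Python's standard library. It does not use Scrapy's own exporters; `to_json`, `to_csv`, and the shape of the items (a list of flat dictionaries with the same keys) are assumptions.

```python
import csv
import io
import json

def to_json(items):
    """Serialize a list of item dictionaries as a JSON array."""
    return json.dumps(items, indent=2)

def to_csv(items):
    """Serialize a list of item dictionaries as CSV, one row per item."""
    buffer = io.StringIO()
    # Column names are taken from the first item's keys.
    writer = csv.DictWriter(buffer, fieldnames=list(items[0]), lineterminator="\n")
    writer.writeheader()
    writer.writerows(items)
    return buffer.getvalue()
```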

Scrapy with Frontera

Frontera is a web crawling toolbox for building crawlers of any scale and purpose. It includes a crawl frontier framework that manages what to crawl, and it contains components that allow the creation of an operational web crawler with Scrapy. Although it was originally designed for Scrapy, it can also be used with any other crawling framework.
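
A crawl frontier, the component that manages what to crawl, can be sketched as a priority queue with a record of URLs already seen. This is a minimal illustration in plain Python, not Frontera's API; the `Frontier` class and its methods are hypothetical.

```python
import heapq

class Frontier:
    """Decides which URL to crawl next: lowest priority number first, no repeats."""

    def __init__(self):
        self._heap = []
        self._seen = set()
        self._counter = 0  # tie-breaker that keeps insertion order stable

    def add(self, url, priority=0):
        """Schedule a URL; return False if it was already scheduled."""
        if url in self._seen:
            return False
        self._seen.add(url)
        heapq.heappush(self._heap, (priority, self._counter, url))
        self._counter += 1
        return True

    def next_url(self):
        """Return the most urgent URL, or None when nothing is left."""
        if not self._heap:
            return None
        return heapq.heappop(self._heap)[2]
```

The crawler asks the frontier for the next URL, fetches it, and hands any discovered links back through `add`, so crawl policy stays in one place.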

Programming Language – Python

Pros

- Suitable for broad crawling and easy to get started

- Large, active developer community

- Easy setup and detailed documentation

Cons

- Does not handle JavaScript natively

- Does not run in a fully distributed environment natively

- Cannot be scaled dynamically

Apify SDK

Apify SDK is a Node.js library that works much like Scrapy and positions itself as a universal web scraping library for JavaScript, with support for Puppeteer, Cheerio, and more. It provides a simple framework for parallel crawling. Its Basic Crawler tool requires the user to implement the page download and data extraction. Two distinctive features are RequestQueue and AutoscaledPool: with RequestQueue you can start with several URLs and recursively follow links to other pages, and with AutoscaledPool you can run scraping tasks at the maximum capacity of the system.
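
The idea of a request queue feeding a pool of parallel workers can be sketched in Python with `asyncio`. This is not the Apify SDK, which is a JavaScript library: `LINKS`, `handle_page`, and `crawl` are hypothetical, and the pool here uses a fixed `concurrency` value rather than scaling with system load as AutoscaledPool is described as doing.

```python
import asyncio

# Hypothetical link graph standing in for real pages: URL -> links found on it.
LINKS = {
    "http://example.com/": ["http://example.com/a", "http://example.com/b"],
    "http://example.com/a": ["http://example.com/b", "http://example.com/c"],
    "http://example.com/b": [],
    "http://example.com/c": ["http://example.com/"],
}

async def handle_page(url):
    """Pretend to download a page and return the links it contains."""
    await asyncio.sleep(0)  # placeholder for network I/O
    return LINKS.get(url, [])

async def crawl(start_urls, concurrency=2):
    queue = asyncio.Queue()
    seen = set()

    def enqueue(url):
        # Deduplicate so each URL is processed at most once.
        if url not in seen:
            seen.add(url)
            queue.put_nowait(url)

    async def worker():
        while True:
            url = await queue.get()
            try:
                for link in await handle_page(url):
                    enqueue(link)
            finally:
                queue.task_done()

    for url in start_urls:
        enqueue(url)
    workers = [asyncio.create_task(worker()) for _ in range(concurrency)]
    await queue.join()  # wait until every queued URL has been handled
    for task in workers:
        task.cancel()
    await asyncio.gather(*workers, return_exceptions=True)
    return seen
```

Calling `asyncio.run(crawl(["http://example.com/"]))` starts from one URL and follows links recursively until the queue is empty.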

Programming Language – JavaScript

Pros

- Supports any type of website

- Best library for web crawling in JavaScript we have tried so far

- Built-in support of Puppeteer

NodeCrawler

NodeCrawler is a popular web crawler for Node.js and a very fast crawling solution. If you prefer coding in JavaScript, or you are working on a mostly JavaScript project, NodeCrawler will be the most suitable web crawler to use. It is also simple to install. It features server-side DOM and automatic jQuery insertion with Cheerio (the default) or JSDOM.

Programming Language – JavaScript

Pros

- Easy installation

Cons

- It has no Promise support

Node SimpleCrawler

Node SimpleCrawler is a flexible and robust API for crawling websites. It can crawl very large websites without any trouble. This crawler is extremely configurable and provides basic stats on network performance.

Programming Language – JavaScript

Pros

- Respects robots.txt rules

- Easy setup and installation

HTTrack

HTTrack is a free and open-source web crawler that lets you download sites. All you need to do is start a project and enter the URLs to copy. The crawler will start downloading the content of the website and you can browse at your own convenience. HTTrack is fully configurable and has an integrated help system. It has versions for Linux and Windows users.

Pro

- Highly configurable for multiple systems

Cons

- Not as easy to use as other products

- May take time to download an entire website

Commercial Web Crawling Tools

A good web crawler tool can help you understand how efficient your website is from a search engine’s point of view. The web crawler takes a list of search engine ranking factors and checks your site against it one by one. By identifying the problems this uncovers and working on them, you can ultimately improve your website’s search performance. Today there are comprehensive all-in-one tools that can find SEO issues in a matter of seconds and present detailed reports about your website’s search performance.
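
Checking a page against a list of factors one by one can be sketched in Python with the standard `html.parser` module. This is a minimal illustration, not how any of the commercial tools below work internally; the three checks, the `SeoAudit` class, and the `audit` function are hypothetical choices.

```python
from html.parser import HTMLParser

class SeoAudit(HTMLParser):
    """Collects a few on-page signals: title, meta description, images without alt text."""

    def __init__(self):
        super().__init__()
        self.in_title = False
        self.title = ""
        self.has_description = False
        self.images_missing_alt = 0

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "title":
            self.in_title = True
        elif tag == "meta" and (attrs.get("name") or "").lower() == "description":
            self.has_description = bool((attrs.get("content") or "").strip())
        elif tag == "img" and not (attrs.get("alt") or "").strip():
            # An empty alt attribute is counted as missing in this sketch.
            self.images_missing_alt += 1

    def handle_endtag(self, tag):
        if tag == "title":
            self.in_title = False

    def handle_data(self, data):
        if self.in_title:
            self.title += data

def audit(html):
    """Run each check in turn and return a list of issue descriptions."""
    parser = SeoAudit()
    parser.feed(html)
    parser.close()
    issues = []
    if not parser.title.strip():
        issues.append("missing title")
    if not parser.has_description:
        issues.append("missing meta description")
    if parser.images_missing_alt:
        issues.append(f"{parser.images_missing_alt} image(s) without alt text")
    return issues
```

A real SEO crawler applies many more checks and runs them across every page it discovers, but the report it produces has the same basic shape: a list of issues per URL.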

Here is our list of commercial web crawling SEO tools.

- Screaming Frog

- DeepCrawl

- Wildshark SEO

- Sitechecker Pro

- Visual SEO Studio

- Dynomapper

- OnCrawl SEO Crawler

Screaming Frog

The Screaming Frog SEO Spider tool is a website crawler that helps improve onsite SEO. It is not cloud-based software; you must download and install it on your PC. It is available for Windows, Mac, and Linux systems. As with other digital marketing tools, you can try out the trial version and crawl 500 URLs for free.

Screaming Frog finds numerous issues with your website and uncovers technical problems with content structure, metadata, missing links, and non-secure elements on a page.

Pros

- Easy to use interface

- Crawls websites and exports data quickly

Cons

- Does not run in the cloud

- Trial run is limited and paid versions are expensive

Deepcrawl

Deepcrawl is an SEO website crawler that provides a comprehensive SEO solution and finds a variety of SEO issues. It can perform audits, help improve user experience, and find more information about your competitors. You can schedule crawls on an hourly, daily, weekly, or monthly basis and have the data exported as reports to your inbox. Deepcrawl can help you improve your website structure and migrate a website, and it offers a product manager for assigning tasks to team members.

The monthly plan ‘Light’ starts at $14 for one project with a limit of 10K URLs per month. ‘Lite Plus’ is $69 and lets you have 3 projects and a limit of 30K URLs. You can also get your own custom enterprise plan.

Pros

- Provides scheduled crawls and audits

- Can crawl millions of pages

- Integrates with Google Analytics and Search Console

Cons

- Large audits can be slow

- Enterprise plans are slightly expensive

Sitechecker Pro

Sitechecker Pro, as the name suggests, is an SEO site checker that offers a clean way to improve your website’s SEO. The tool can help you find issues with metadata, headings, and external links. It also provides a way to improve domain authority with link building and on-page optimization. Sitechecker Pro has a plugin and also provides a Chrome extension.

The monthly plan ‘Lite’ starts at $9 to crawl 500 URLs. The ‘Startup’ plan is $29 to crawl up to 5 websites and 1,000 URLs per website. The ‘Growing’ plan is $69 to crawl 10 websites and 5K URLs per website.

Pros

- Provides comprehensive site audit

- Easy navigation and smooth interface

Cons

- Does not provide detailed information or suggestions to improve SEO

- Slower compared to other SEO tools

Dynomapper

Dynomapper is a dynamic website crawler that can improve your website’s SEO and structure. The tool creates sitemaps with its Dynomapper site generator and performs site audits. With the site generator, you can quickly work out how to optimize your website. It also provides content audits, content planning, and keyword tracking. The crawled data can be exported in CSV and Excel formats, or you can schedule the data to be delivered weekly or monthly.

The standard plan is $49/month where you get 25 projects and 5K URLs per crawl. The ‘Organization’ plan is $199/month for 50 projects and 25K pages per crawl.

Pros

- Provides a deeper content analysis than other SEO tools

- Good for project management

Con

- The price may be high for its functionality

OnCrawl SEO Crawler

OnCrawl provides an SEO website crawler that audits your site to help you develop an SEO strategy. The crawler traverses the pages on your site and identifies and logs the SEO issues it discovers. It evaluates sitemaps, pagination, and canonical URLs, and searches for bad status codes. It also examines content quality and helps you determine a good loading time for a URL. OnCrawl helps you prepare for your mobile audience and lets you compare crawl reports so you can track your improvement over time. You can export your reports in CSV, PNG, and PDF formats.
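
Two of the checks mentioned above, bad status codes and canonical URLs, can be sketched in a few lines of Python. This is an illustration only, not OnCrawl's implementation: the record format (dictionaries with `url`, `status`, and an optional `canonical` key) and the `find_issues` function are hypothetical, and a canonical URL that points elsewhere is flagged for review rather than treated as an error.

```python
def find_issues(pages):
    """Flag pages with error status codes or a canonical URL that differs from the page URL."""
    issues = []
    for page in pages:
        url, status = page["url"], page["status"]
        if status >= 400:
            issues.append((url, f"bad status code {status}"))
        canonical = page.get("canonical")
        if canonical and canonical != url:
            issues.append((url, f"canonical points to {canonical}"))
    return issues
```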

Pros

- Good comprehensive SEO crawler

- Better support for mobile audiences

Cons

- Not great for keyword research and tracking

- Reports may be a bit technical for beginners

Visual SEO Studio

Visual SEO Studio has two versions, a paid and a free one. The free version can crawl a maximum of 500 pages and find issues with page titles, metadata, broken links, and robots.txt directives. It offers suggestions to improve SEO and generates XML sitemaps for your site. The paid version is 140€ per year and can crawl 150K pages.

Pros

- Simple Interface

- Good for beginners

Cons

- Crawling limits are based on your hardware

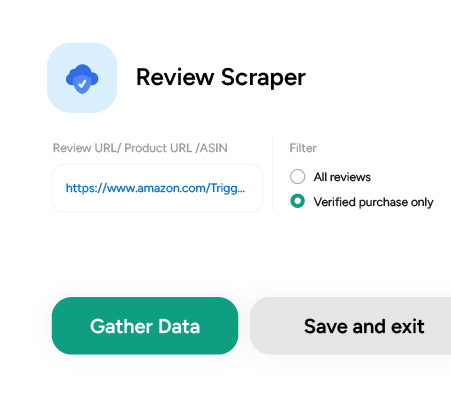

Don’t want to code? ScrapeHero Cloud is exactly what you need.

With ScrapeHero Cloud, you can download data in just two clicks!

Wildshark SEO Spider Tool

The WildShark SEO spider tool is a basic free SEO tool for finding site issues and improving usability. To download the tool, you have to go to their website and fill out a form. To crawl a website, you just type in the website URL and hit the start button. WildShark will report the overall health of your site and find competitor keywords, missing titles, and broken links. You can download the crawled data and export it as a report.

Pros

- Good for basic SEO audits

- A free tool without crawl limitations

Cons

- Only available for Windows desktop

- The product is still in its nascent stages

If you have greater scraping requirements or would like to scrape on a much larger scale, it’s better to use an enterprise web scraping service like ScrapeHero. We are a full-service provider: you do not need to use any tools, and you get clean data without any hassle.
