In today’s digital age, data is the essence of decision-making and strategic planning across industries. The ability to extract and interpret this vast reservoir of information can set businesses apart.

However, with the exponential growth of data on the web, extracting relevant information manually is no longer practical. This raises an important question: which tools extract big data effectively?

The answer is data extraction tools and software. These web scraping tools efficiently automate the process of gathering and refining data from diverse sources. They not only save valuable time and resources but also enhance accuracy and reliability in data analysis.

Recognizing their importance, we have curated a list of the best data extraction tools and software for 2024, evaluating them based on their functionality, ease of integration, and user-friendly interfaces.

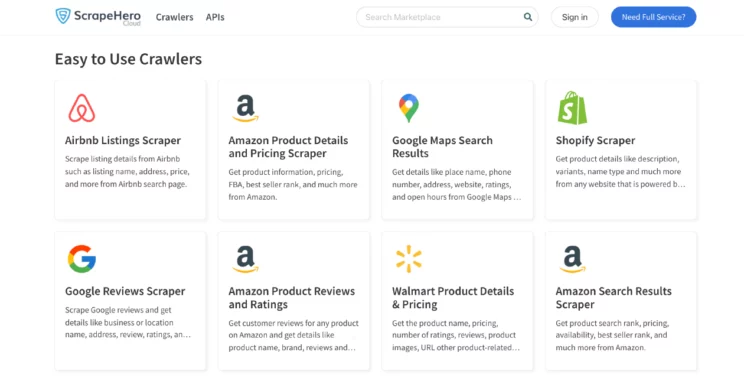

ScrapeHero Cloud

ScrapeHero Cloud is a cloud-based platform that simplifies the process of collecting information from various websites. The data extraction tools it offers have a user-friendly interface and robust functionalities that accommodate both novice and experienced users. It stands out for its efficiency and scalability, making web scraping accessible to a wide audience.

Key Features

- Point and Click Interface: ScrapeHero Cloud offers an intuitive point-and-click interface, allowing users to easily select the data they wish to extract without writing a single line of code.

- Pre-built Crawlers: Access to a wide range of pre-built crawlers for popular websites, enabling users to start collecting data instantly.

- Real-time APIs: Easy-to-use APIs that fetch real-time data from websites such as Amazon and Walmart.

- Scheduled Scraping: Users can schedule their scraping tasks to run automatically at specific intervals, ensuring timely data collection without manual intervention.

- Customization: For websites not covered by pre-built crawlers, ScrapeHero Cloud offers custom web scraping services tailored to specific data extraction needs.
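Scheduled scraping comes down to running the same job at fixed intervals. The sketch below illustrates that idea with Python's standard `sched` module; it is not how ScrapeHero Cloud works internally. `FakeClock`, `run_on_interval`, and `scrape_job` are hypothetical names introduced for this example, and the simulated clock is an assumption that lets the example finish instantly instead of waiting for hours.

```python
import sched


class FakeClock:
    """Simulated clock so the example finishes instantly instead of waiting hours."""

    def __init__(self):
        self.now = 0.0

    def time(self):
        return self.now

    def sleep(self, seconds):
        # Advance simulated time rather than blocking.
        self.now += seconds


def run_on_interval(job, interval_seconds, runs, timefunc, delayfunc):
    """Run `job` a fixed number of times, `interval_seconds` apart, starting now."""
    scheduler = sched.scheduler(timefunc, delayfunc)
    start = timefunc()
    for i in range(runs):
        scheduler.enterabs(start + i * interval_seconds, 1, job)
    scheduler.run()


clock = FakeClock()
run_times = []


def scrape_job():
    # Placeholder for a real scraping task; it only records when it ran.
    run_times.append(clock.time())


# Three hourly runs on the simulated clock.
run_on_interval(scrape_job, 3600, 3, clock.time, clock.sleep)
```

For a real schedule, passing `time.monotonic` and `time.sleep` in place of the fake clock's methods should give the same behavior in wall-clock time.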

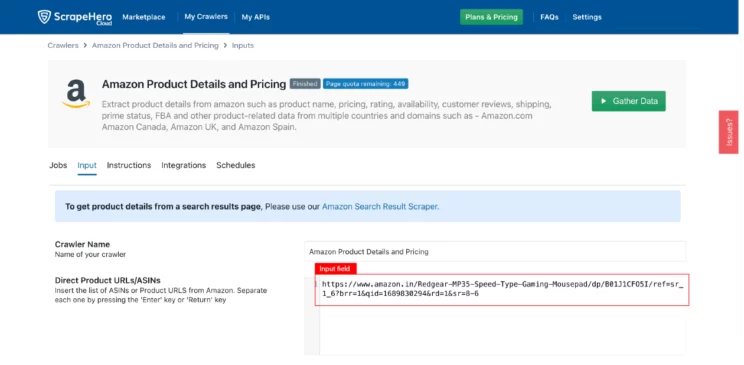

Steps to Set Up a Scraper on ScrapeHero Cloud

- Create an account

Don’t want to code? ScrapeHero Cloud is exactly what you need.

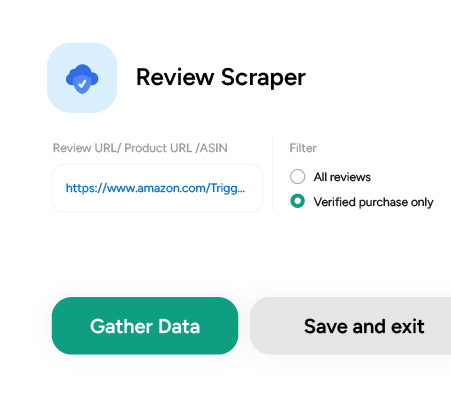

With ScrapeHero Cloud, you can download data in just two clicks!

- Select the scraper you wish to run.

- Provide input and click ‘Gather Data.’ The crawler will then be up and running.

Available Data Formats

ScrapeHero Cloud supports multiple data formats, providing flexibility in how the extracted data is utilized. The available formats include:

- CSV: Ideal for spreadsheet applications and simple data analysis.

- JSON: Suitable for web applications and data interchange.

- Excel: Offers a familiar format for business analysts and non-technical users.

- XML: Useful for complex data structures or integration with certain types of software.
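To make the difference between these formats concrete, here is a minimal sketch that serializes the same records as CSV, JSON, and XML using only Python's standard library. The sample records and the function names `to_csv`, `to_json`, and `to_xml` are made up for illustration; this is not the exporter ScrapeHero Cloud uses. Excel is left out because writing `.xlsx` files needs a third-party package.

```python
import csv
import io
import json
import xml.etree.ElementTree as ET

# Hypothetical scraped records; the field names are illustrative only.
records = [
    {"name": "Widget", "price": "19.99"},
    {"name": "Gadget", "price": "4.50"},
]


def to_csv(rows):
    """Serialize rows as CSV text with a header line (expects at least one row)."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=list(rows[0]), lineterminator="\n")
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


def to_json(rows):
    """Serialize rows as a JSON array of objects."""
    return json.dumps(rows, indent=2)


def to_xml(rows):
    """Serialize rows as <records><record>...</record></records>."""
    root = ET.Element("records")
    for row in rows:
        item = ET.SubElement(root, "record")
        for key, value in row.items():
            ET.SubElement(item, key).text = value
    return ET.tostring(root, encoding="unicode")
```

CSV keeps the data flat, while JSON and XML can carry nested fields if a record ever needs them.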

Pricing

ScrapeHero Cloud employs a tiered pricing model designed to accommodate the varying needs and budgets of its users. While specific pricing details may vary, the structure typically includes:

- Free Tier: Offers limited access to pre-built crawlers and a certain amount of monthly data points, suitable for small-scale or trial use.

- Paid Plans: Range from basic to enterprise levels, with increasing numbers of data points, concurrent crawls, and advanced features like API access and custom crawler development. Pricing may start from a nominal monthly fee and scale up based on usage and service levels.

This flexible pricing strategy ensures that ScrapeHero Cloud is accessible to individuals and organizations of all sizes, from startups to large enterprises, making it a versatile choice for various data extraction tasks.

Web Scraper.io

Web Scraper.io is a browser-based tool designed for data extraction from web pages. It allows users to create sitemaps to navigate and extract data from websites.

Key Features

- Chrome Extension: Accessible as a Chrome extension, facilitating straightforward setup and use.

- Visual Sitemap Builder: Enables the creation of sitemaps visually to define what data to scrape and from where.

- Data Preview: Allows users to preview data before extraction.

- Cloud Scraping: Offers cloud services for running scrapers without using local resources.

Cons

- Limited to Chrome, which might not suit all users.

- Cloud scraping and some features require a subscription.

Pricing

Web Scraper.io offers a tiered pricing model, including:

- Free Version: Limited features with access to the Chrome extension and basic scraping functionalities.

- Paid Subscriptions: Provide enhanced features like cloud scraping, API access, and higher rate limits. Pricing varies based on the plan and features included, designed to suit different usage levels and requirements.

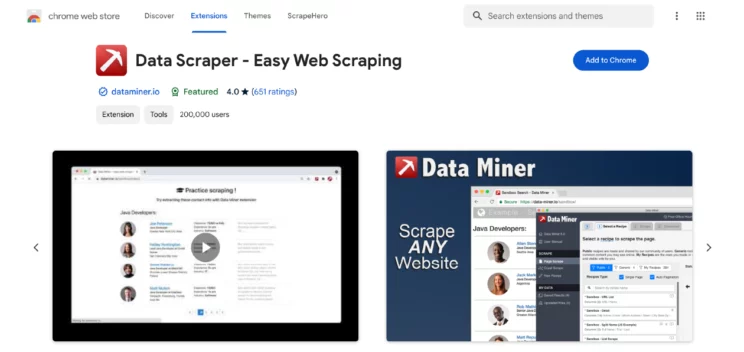

Data Scraper

Data Scraper is a data extraction tool available as a browser extension, making it accessible directly from the web browser.

Key Features

- Easy-to-use Interface: Simplifies the process of setting up and executing web scraping tasks.

- Automatic Data Extraction: Automatically identifies and extracts data from web pages.

- Customizable Data Selection: Allows users to customize the data fields they wish to extract.

- Pagination Handling: Capable of navigating through pagination to collect data across multiple pages.
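Pagination handling generally means following a "next" link until there are no more pages. The sketch below shows that loop with Python's standard `html.parser`; it is not Data Scraper's implementation. The `PageParser` class, the `scrape_all` function, the `rel="next"` convention, and the in-memory `FAKE_SITE` are all assumptions made so the example runs without network access.

```python
from html.parser import HTMLParser


class PageParser(HTMLParser):
    """Collects the text of <li> items and the href of a rel="next" link."""

    def __init__(self):
        super().__init__()
        self.items = []
        self.next_url = None
        self._in_item = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "li":
            self._in_item = True
        elif tag == "a" and attrs.get("rel") == "next":
            self.next_url = attrs.get("href")

    def handle_endtag(self, tag):
        if tag == "li":
            self._in_item = False

    def handle_data(self, data):
        if self._in_item and data.strip():
            self.items.append(data.strip())


def scrape_all(fetch, start_url, max_pages=50):
    """Follow next links from start_url and return every item found."""
    items, url, seen = [], start_url, set()
    # Stop on a missing next link, a repeated URL, or the page limit.
    while url and url not in seen and len(seen) < max_pages:
        seen.add(url)
        parser = PageParser()
        parser.feed(fetch(url))
        items.extend(parser.items)
        url = parser.next_url
    return items


# In-memory stand-in for a paginated website.
FAKE_SITE = {
    "/page/1": '<ul><li>A</li><li>B</li></ul><a rel="next" href="/page/2">Next</a>',
    "/page/2": "<ul><li>C</li></ul>",
}

all_items = scrape_all(FAKE_SITE.__getitem__, "/page/1")
```

The `seen` set and `max_pages` limit guard against sites whose next links loop back on themselves.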

Cons

- Limited to the browser, potentially restricting its use across different platforms or environments.

- May require manual adjustments for complex websites or data structures.

- Free version comes with limitations on features and the amount of data that can be extracted.

Pricing

Data Scraper offers a simple pricing structure, including:

- Free Version: Provides basic functionality with limitations on the number of pages and data rows that can be scraped.

- Paid Plans: Offer increased limits and additional features such as advanced data extraction options and support. Pricing details vary based on the level of functionality and support required by the user, aiming to accommodate a range of budgets and needs.

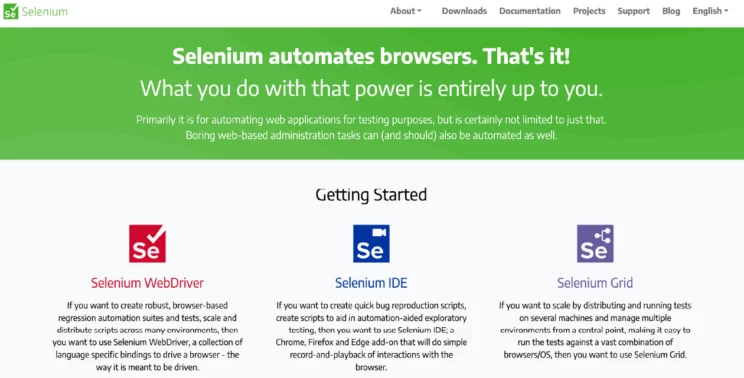

Selenium

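Selenium is an open-source browser automation framework. It was built for testing web applications, but because it drives a real browser it is also used to extract data from dynamic pages. It is primarily used by experienced developers.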

Key Features

- Cross-Browser Compatibility: Selenium supports all major browsers, enabling tests to be run across Chrome, Firefox, Safari, Internet Explorer, and Edge.

- Multi-Language Support: Offers bindings for several programming languages, including Java, C#, Python, Ruby, and JavaScript.

- Selenium WebDriver: Directly communicates with the browser, allowing for more complex and interactive tests that mimic real-user actions.

- Selenium Grid: Enables simultaneous execution of tests across different browsers and environments.

Cons

- Learning Curve: Beginners may find Selenium’s wide range of functionalities overwhelming, requiring a significant investment of time to master.

- Setup Complexity: Initial setup, especially of Selenium Grid for parallel testing, can be complex and time-consuming.

- No Built-In Image Testing: Selenium does not natively support image-based testing, requiring integration with third-party tools for visual testing needs.

Pricing

Selenium is an open-source tool and is available at no cost.

Scrapy

Scrapy is an open-source framework for extracting data from websites.

Key Features

- Flexible Scrapy Shell: Offers an interactive shell for testing XPath or CSS expressions on the fly, enhancing the data extraction process.

- Built-in Support for Selecting and Extracting Data: Uses XPath and CSS selectors, simplifying the process of pinpointing and extracting data.

- Extensible: Allows for adding custom functionality through plugins; this includes middleware and pipelines for processing data.

- Built-in Support for Output Formats: Facilitates the export of scraped data into various formats.

- Robust Encoding Support: Automatically handles the encoding of the scraped data, ensuring data integrity.
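To give a feel for selector-based extraction, the sketch below pulls fields out of a page with XPath-style expressions. It deliberately does not use Scrapy, so that it runs with the standard library alone; `xml.etree.ElementTree` supports only a subset of XPath and requires well-formed markup, which real HTML often is not. The sample page and the `extract_quotes` function are hypothetical.

```python
import xml.etree.ElementTree as ET

# Well-formed markup standing in for a downloaded page.
PAGE = """
<html><body>
  <div class="quote">
    <span class="text">First quote</span>
    <small class="author">Ann</small>
  </div>
  <div class="quote">
    <span class="text">Second quote</span>
    <small class="author">Bob</small>
  </div>
</body></html>
"""


def extract_quotes(markup):
    """Return one dict per quote block, selected with XPath-style paths."""
    root = ET.fromstring(markup.strip())
    quotes = []
    # Select every quote container, then read fields relative to it.
    for node in root.findall(".//div[@class='quote']"):
        quotes.append({
            "text": node.findtext("span[@class='text']"),
            "author": node.findtext("small[@class='author']"),
        })
    return quotes


quotes = extract_quotes(PAGE)
```

Selecting a container first and then reading fields relative to it keeps each record's values together, which is the same pattern a selector-based spider typically follows.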

Cons

- Steeper learning curve compared to some browser-based scraping tools.

- Requires programming knowledge, primarily in Python, which might be a barrier for non-developers.

- Setup and deployment can be complex, especially for beginners.

Pricing

As an open-source tool, Scrapy is available for free. There are no licensing fees or subscriptions required to use the framework. However, costs may arise indirectly, such as for server hosting, if you’re running large-scale scraping jobs or deploying spiders to the cloud.

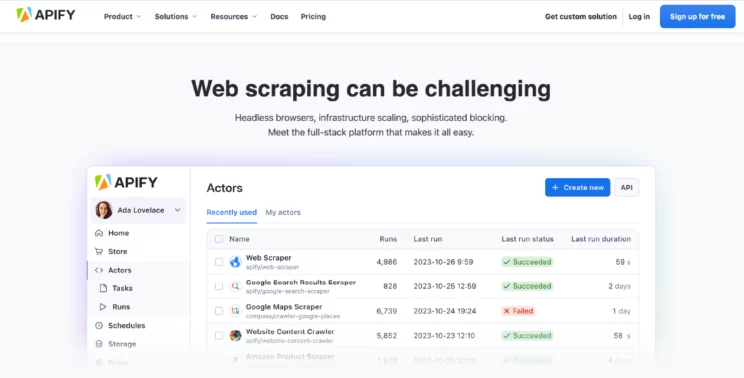

Apify

Apify is a cloud-based platform that provides web scraping and automation services to transform websites into APIs.

Key Features

- Actor Model for Web Scraping: Utilizes actors, which are cloud-native web scraping and automation jobs, for scalable and efficient data extraction.

- Scheduler: Allows for the scheduling of actors to run at specific times or intervals, automating data collection processes.

- Integrated Data Storage: Offers a built-in data storage solution, enabling the easy handling and storage of extracted data.

- Proxy Management: Provides proxy services to avoid IP blocking and manage requests over multiple IP addresses.
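Spreading requests over multiple IP addresses is, at its simplest, a round-robin over a pool of proxies. The sketch below shows only that idea and is not Apify's proxy service. The `ProxyRotator` class and `assign_proxies` function are hypothetical, and the addresses come from the reserved documentation range rather than real proxies.

```python
from itertools import cycle


class ProxyRotator:
    """Hands out proxies from a fixed pool in round-robin order."""

    def __init__(self, proxies):
        if not proxies:
            raise ValueError("at least one proxy is required")
        self._pool = cycle(proxies)

    def next_proxy(self):
        return next(self._pool)


def assign_proxies(urls, rotator):
    """Pair each URL with the proxy its request should go through."""
    return [(url, rotator.next_proxy()) for url in urls]


# Placeholder addresses from the reserved documentation range (192.0.2.0/24).
rotator = ProxyRotator(["http://192.0.2.10:8080", "http://192.0.2.11:8080"])
plan = assign_proxies(
    ["https://example.com/a", "https://example.com/b", "https://example.com/c"],
    rotator,
)
```

A production rotator would usually also retire proxies that get blocked, which this sketch leaves out.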

Cons

- Making full use of its advanced features and actor model involves a learning curve.

- Pricing can be higher for extensive usage or large-scale projects, compared to basic scraping tools.

- Dependence on cloud infrastructure means users must have internet access for operations.

Pricing

Apify offers a tiered pricing model, including:

- Free Plan: Provides access to basic features with limited resources, suitable for small projects or evaluation purposes.

- Paid Plans: Offer increased resource limits, priority support, and additional features, designed to meet the needs of more demanding users and projects. Pricing varies based on usage, with options for monthly subscriptions and pay-as-you-go plans to accommodate different scales of operation and budget constraints.

Dexi.io

Dexi.io is a cloud-based web scraping and data processing platform that enables you to extract and transform data from web sources.

Key Features

- Robust Web Scraping: Offers tools for both visual and code-based data extraction.

- Data Processing and Integration: Allows for the transformation and integration of scraped data into databases or web services.

- Real-Time Data Extraction: Supports data extraction in real-time for up-to-the-minute accuracy.

- Collaboration Tools: Facilitates team collaboration with shared projects and workflows.

Cons

- It can be complex for beginners due to its extensive features.

- Pricing may be higher than simpler tools.

Pricing

Dexi.io offers a tiered pricing model, including a free trial for new users. Paid plans vary based on features, data volume, and support levels, catering to a range of needs from small projects to enterprise solutions.

Mozenda

Mozenda is a data extraction software that automates the collection of web data. It emphasizes ease of use with a point-and-click interface.

Key Features

- Visual Data Extraction: Enables users to easily select data points using a visual interface.

- Data Collection Automation: Automates the process of collecting data from multiple web pages or sources.

- Agent Builder: Allows for the creation of custom agents to navigate and extract data from complex websites.

- Cloud Storage: Provides cloud-based storage for collected data, ensuring accessibility and security.

Cons

- Pricing can be high for small businesses or individual users.

- Limited customization options for complex scraping requirements.

Pricing

Mozenda operates on a subscription model, with pricing based on the number of pages scraped and the number of concurrent agents. Detailed pricing is available upon request, with options designed to fit various business sizes and data needs.

Diffbot

Diffbot is an AI-powered web scraping tool that uses advanced machine learning and computer vision technologies to extract data from web pages automatically.

Key Features

- Automatic APIs: Offers pre-built APIs for extracting data from articles, products, and more.

- Custom APIs: Allows users to create custom extraction rules for specific needs.

- Knowledge Graph: Builds a vast database of connected data points for comprehensive analysis.

- Scalability: Engineered for high-volume data extraction and processing.

Cons

- It may require a higher budget, especially for access to the knowledge graph and custom APIs.

- The complexity of AI technologies might present a learning curve.

Pricing

Diffbot offers a tiered pricing structure, including a free trial. Paid plans are based on API call volumes and access to advanced features like the knowledge graph.

ScraperAPI

ScraperAPI is a web scraping API and proxy solution designed to handle data extraction from even the most challenging websites. It automatically manages IP rotations, CAPTCHAs, and geo-locations, enabling efficient and reliable web scraping without the typical roadblocks.

Key Features

- Automatic Proxy Management: Rotates IP addresses and manages CAPTCHAs, preventing blocks and enhancing your data extraction success rate.

- JavaScript Rendering: Effortlessly scrape dynamic websites that rely on JavaScript for content.

- Flexible Integration: Simple RESTful endpoints make integrating with numerous programming languages and frameworks easy.

- Advanced Request Controls: Supports custom headers, cookies, and geo-targeting to tailor your web scraping tool setup.
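With an API-based scraper, a request usually amounts to a single URL that carries your key, the target page, and a few options. The sketch below shows how such a URL might be composed. The endpoint `https://api.example.com/scrape` and the parameter names `api_key`, `url`, `render`, and `country` are hypothetical placeholders, not ScraperAPI's actual interface, so check the provider's documentation for the real names.

```python
from urllib.parse import parse_qs, urlencode, urlsplit

# Hypothetical endpoint used for illustration only.
API_ENDPOINT = "https://api.example.com/scrape"


def build_request_url(api_key, target_url, render_js=False, country=None):
    """Compose the request URL; optional settings are added only when used."""
    params = {"api_key": api_key, "url": target_url}
    if render_js:
        params["render"] = "true"
    if country:
        params["country"] = country
    # urlencode percent-encodes the target URL so its own query string survives.
    return f"{API_ENDPOINT}?{urlencode(params)}"


request_url = build_request_url(
    "YOUR_KEY",
    "https://example.com/products?page=2",
    render_js=True,
    country="us",
)

# Decoding the query string recovers the original target URL intact.
decoded = parse_qs(urlsplit(request_url).query)
```

Encoding the target URL matters because it often contains its own `?` and `&` characters, which would otherwise be read as parameters of the API call.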

Cons

- Focuses primarily on API-based workflows, so users seeking point-and-click interfaces may need additional tools.

- Complex projects may require some developer knowledge to configure optimally.

Pricing

ScraperAPI uses a tiered pricing structure, with plans based on the number of API requests and bandwidth. A free trial is available for new users, allowing them to explore essential features such as rotating proxies and JavaScript rendering.

Import.io

Import.io is a web data integration service that allows users to convert web data into structured, machine-readable data.

Key Features

- Point-and-Click Interface: Simplifies the data extraction process with a user-friendly visual interface.

- Data Transformation: Offers tools for cleaning and transforming scraped data.

- API Integration: Enables the integration of extracted data with other applications or databases.

- Large-Scale Scraping: Designed to handle large volumes of data and complex scraping tasks.
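Cleaning and transforming scraped data typically means normalizing whitespace and turning display strings into typed values. The sketch below shows one such step and is not Import.io's tooling. The `clean_record` function and the field names are hypothetical, and it assumes US-style prices where commas separate thousands and a period marks the decimals.

```python
import re
from decimal import Decimal


def clean_record(raw):
    """Normalize whitespace in the name and parse the price into a Decimal."""
    # Collapse runs of spaces, tabs, and newlines into single spaces.
    name = " ".join(raw["name"].split())
    # Keep only digits and the decimal point (assumes US-style formatting).
    digits = re.sub(r"[^\d.]", "", raw["price"])
    return {"name": name, "price": Decimal(digits)}


cleaned = clean_record({"name": "  Blue   Widget\n", "price": "$1,299.00"})
```

Using `Decimal` rather than `float` avoids rounding surprises when prices are later summed or compared.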

Cons

- Pricing may be prohibitive for small businesses or individual projects.

- Some complex websites may require advanced setup or customization.

Pricing

Import.io offers custom pricing based on the scale of the project and the specific needs of the user. This includes options for small projects as well as enterprise solutions, with detailed pricing available upon request.

What is the Best Data Extraction Software or Tool?

Selecting the best data extraction tool or software is an important step in utilizing the power of big data. In this blog, we’ve explored a range of both free and paid data extraction tools and software, each with its unique features, strengths, and limitations.

From cloud-based platforms like ScrapeHero Cloud to browser extensions such as Data Scraper and Web Scraper.io, the variety ensures there’s a tool out there to meet the specific needs of any project, regardless of its scale or complexity.

However, choosing the best data extraction tool is subjective and heavily dependent on individual requirements such as the volume of data, the complexity of websites, budget constraints, and the need for customization.

For businesses that need to extract vast amounts of data and require tailored solutions, the ScrapeHero web scraping service is a compelling choice. Apart from offering pre-built crawlers, ScrapeHero also provides custom web scraping services.

Whether you’re looking to gather market research, monitor competitor pricing, or aggregate news content, we can handle big data needs with a level of customization that generic tools can’t match.
