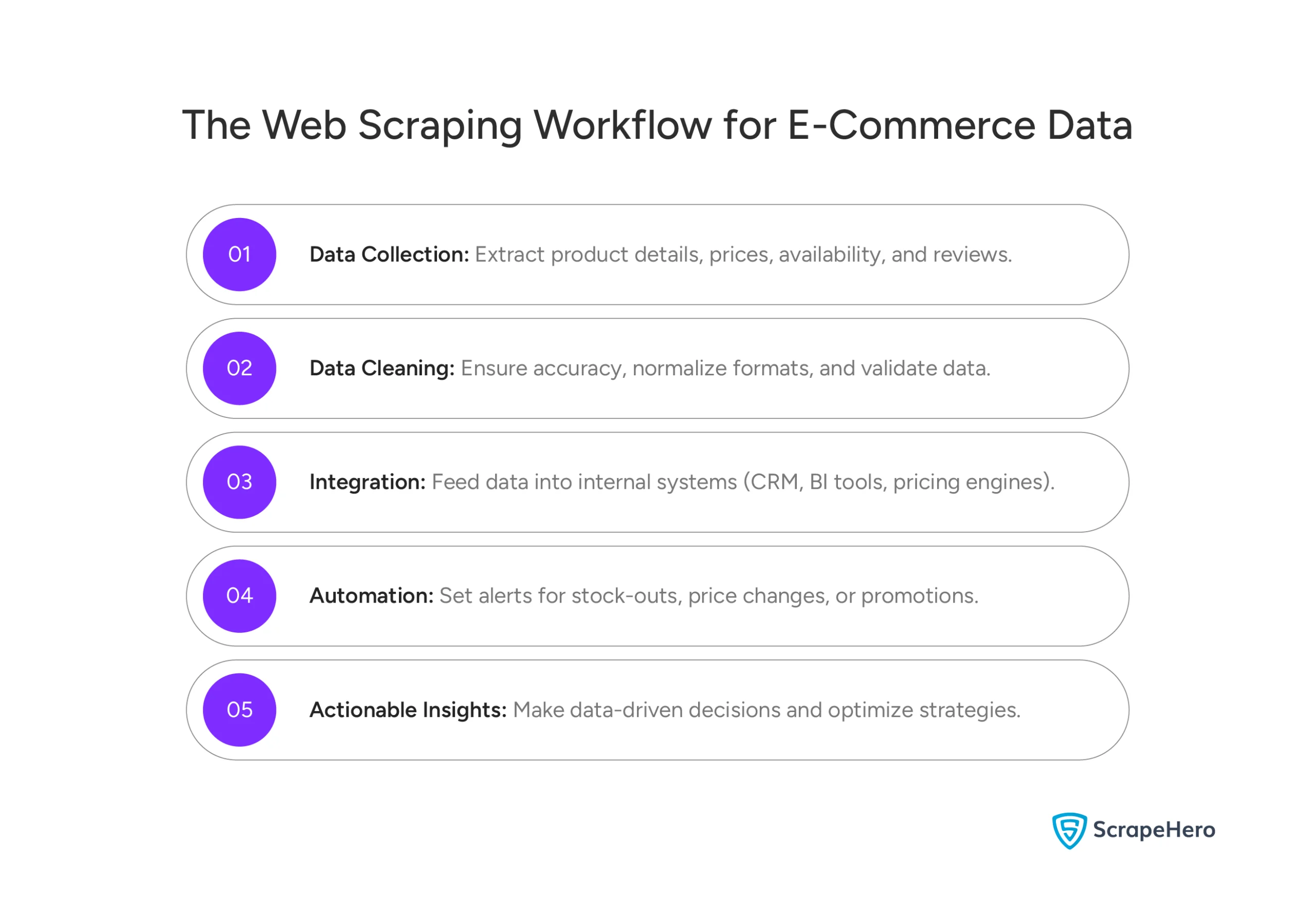

When you do e-commerce web scraping right, it becomes part of your daily operations. It provides insights that help you make pricing decisions. It catches MAP violations early. It shows you visibility problems before sales drop. And it brings up customer issues while you can still fix them.

That makes finding the best web scraping services for e-commerce essential for staying competitive.

This guide helps you understand what makes a web scraping service ready for e-commerce. You’ll learn how to evaluate providers based on business results—not just tools. And you’ll see which solutions work best for your scale, team setup, and goals.


What Makes a Web Scraping Service E-Commerce-Ready

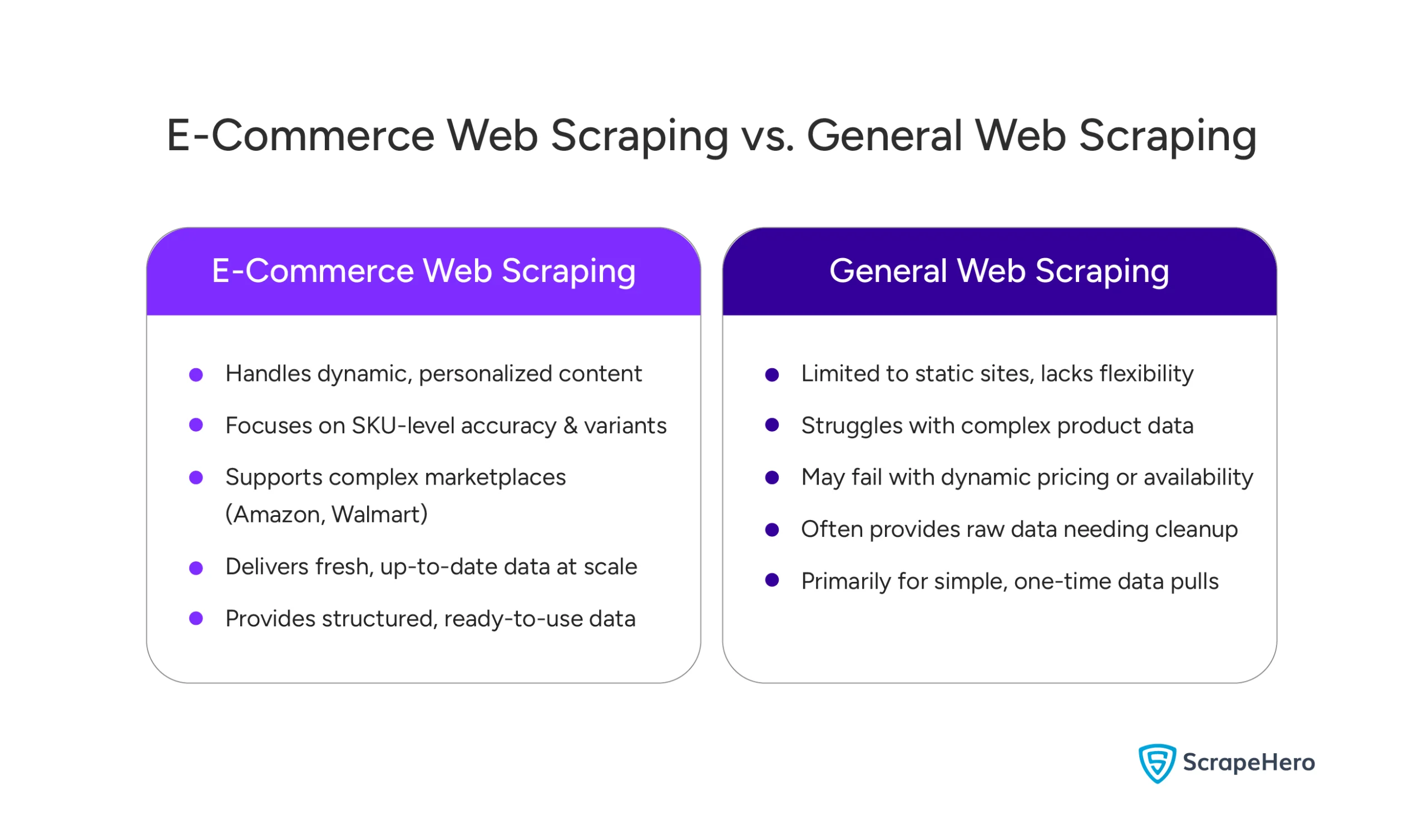

Not every web scraping service works well for e-commerce. Many tools and services handle blogs, news sites, or simple directories just fine but fail when applied to online retail. That’s why web scraping services for e-commerce companies need to meet specific technical and business requirements.

E-commerce scraping is harder by design. Product pages change all the time. Marketplaces use layouts that shift constantly. Your business decisions need clean, consistent data over time. One-time pulls don’t cut it. That’s why general web scraping and e-commerce scraping aren’t the same thing.

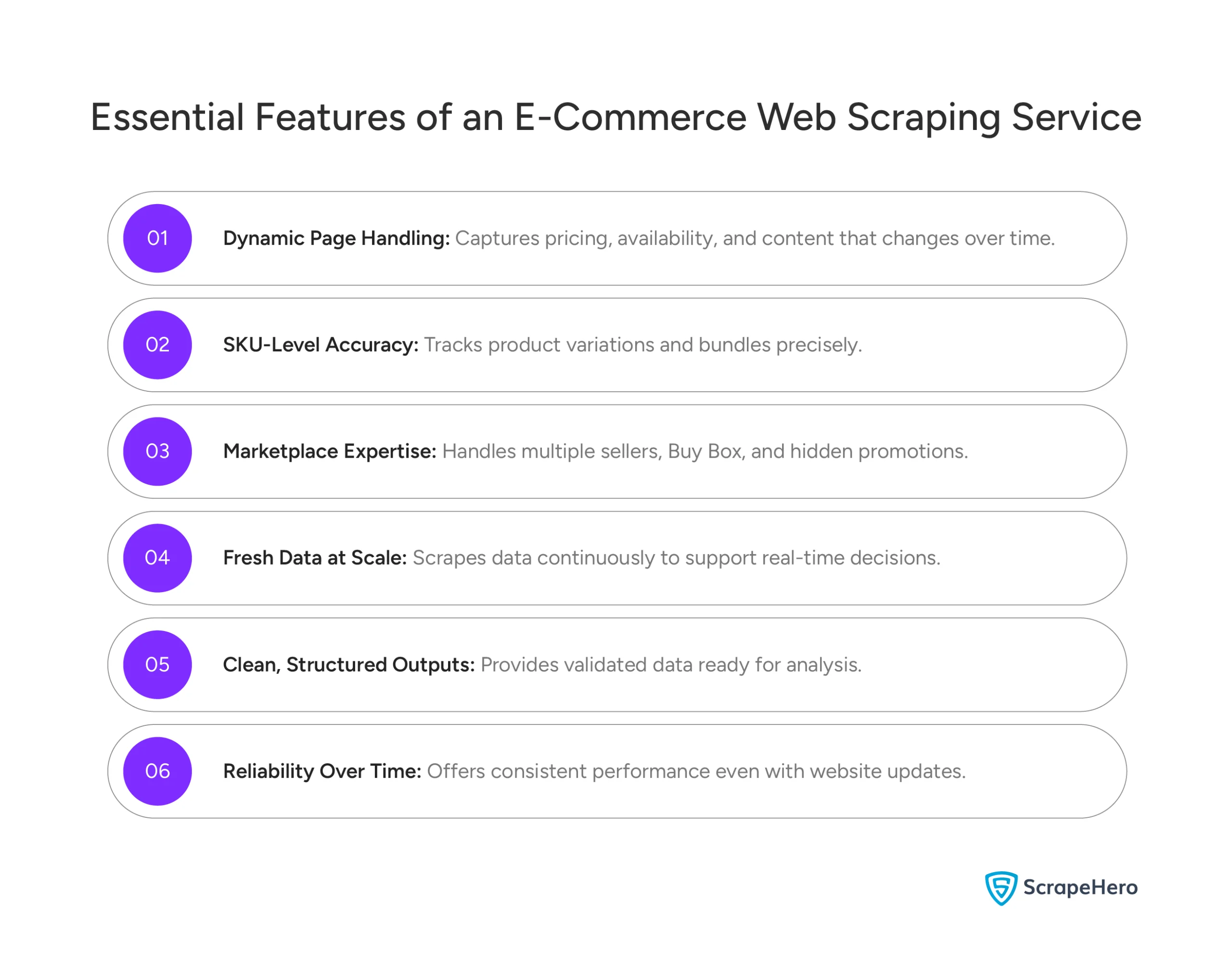

Here are the questions that separate an e-commerce-ready scraping service from a basic one.

- Can It Handle Dynamic E-Commerce Pages?

- Does It Provide SKU-Level Accuracy?

- Can It Support Marketplace Complexity?

- Does It Provide Fresh Data at Scale?

- Are the Outputs Clean and Structured?

- Is It Reliable in the Long Term?

Can It Handle Dynamic E-Commerce Pages?

Modern e-commerce sites use JavaScript heavily. They use dynamic pricing modules and personalized content. Prices, availability, and promotions often load at different times or change based on location and user context. If a service can’t reliably render and pull this data, it will miss critical signals or give you incomplete results.

Does It Provide SKU-Level Accuracy?

E-commerce decisions happen at the SKU level. Variants, pack sizes, flavors, and bundles need to be mapped correctly. A scraping service must distinguish among similar products. It needs to normalize variations. And it needs to keep consistent SKU identifiers across runs. Without this, your pricing comparisons become unreliable. So do your MAP monitoring and availability tracking.
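
As a rough illustration of what "normalizing variations" and "consistent SKU identifiers" can mean in practice, here is a minimal Python sketch. The `Variant` fields, the title patterns, and the key format are all assumptions made for this example, not any provider's actual method.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class Variant:
    """Hypothetical variant record; the field names are illustrative only."""
    brand: str
    product: str
    flavor: str
    pack_size: int  # number of units in the pack


def _slug(text: str) -> str:
    """Lowercase and collapse runs of non-alphanumerics into single hyphens."""
    return re.sub(r"[^a-z0-9]+", "-", text.lower()).strip("-")


def parse_pack_size(title: str) -> int:
    """Read a pack size such as 'Pack of 12', '12-Pack', or '12 ct' from a title.

    Returns 1 when no pack size is found (assumed to be a single unit).
    """
    patterns = (
        r"pack\s+of\s+(\d+)",
        r"(\d+)\s*-?\s*(?:pack|pk|ct|count)\b",
    )
    for pattern in patterns:
        match = re.search(pattern, title, flags=re.IGNORECASE)
        if match:
            return int(match.group(1))
    return 1


def variant_key(v: Variant) -> str:
    """Build a stable key so the same variant maps to the same ID on every run."""
    return "|".join([_slug(v.brand), _slug(v.product), _slug(v.flavor), str(v.pack_size)])
```

With a key like this, "ACME Cola  Zero, Cherry, 12-Pack" on one site and "Acme cola zero CHERRY (Pack of 12)" on another can resolve to the same identifier, which is what makes price comparisons across runs meaningful.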

Can It Support Marketplace Complexity?

Marketplaces like Amazon, Walmart, and Target add layers of complexity. You’ve got multiple sellers per listing. You’ve got rotating Buy Box winners. You’ve got hidden promotions, regional availability, and constant layout changes. An e-commerce-ready web scraping service understands these structures. It captures the right data fields without breaking when marketplaces update their pages.

Does It Provide Fresh Data at Scale?

E-commerce data loses value quickly. Prices change daily. Stock statuses shift hourly. Reviews pile up constantly. A service needs to support scheduled, high-frequency scraping. It needs to handle thousands of SKUs without slowing down or leaving gaps. One-time or random scraping might look fine in a demo. But it fails when you need it every day.
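
One way to check whether a feed is actually fresh is to compare each SKU's last observation against a target age. The sketch below assumes daily price targets and hourly stock targets, mirroring the cadence described above. The `MAX_AGE` values and the `stale_skus` helper are hypothetical.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical freshness targets per data type; tune these to your own needs.
MAX_AGE = {
    "price": timedelta(days=1),
    "stock": timedelta(hours=1),
}


def stale_skus(last_seen: dict[str, datetime], kind: str, now: datetime) -> list[str]:
    """Return SKUs whose latest observation is older than the target for `kind`."""
    limit = MAX_AGE[kind]
    return sorted(sku for sku, ts in last_seen.items() if now - ts > limit)


now = datetime(2024, 1, 2, 12, 0, tzinfo=timezone.utc)
last_seen = {
    "SKU-1": now - timedelta(minutes=30),
    "SKU-2": now - timedelta(hours=3),
}
print(stale_skus(last_seen, "stock", now))  # ['SKU-2']
print(stale_skus(last_seen, "price", now))  # []
```

A check like this turns "leaving gaps" from a vague worry into a number you can track per run.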

Are the Outputs Clean and Structured?

Raw HTML isn’t useful for e-commerce teams. Data needs to arrive structured, validated, and ready for analysis. That means prices, discounts, availability, seller names, ratings, and review text in consistent formats. The less time your team spends cleaning data, the faster you can act on it.
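
To make "structured and validated" concrete, here is a small sketch of a record type with a price parser and a few sanity checks. The `ProductRecord` schema and the validation rules are assumptions for illustration, and the parser only handles US-style prices such as "$1,299.99".

```python
import re
from dataclasses import dataclass
from decimal import Decimal
from typing import Optional


@dataclass(frozen=True)
class ProductRecord:
    """Hypothetical output schema; real feeds will carry more fields."""
    sku: str
    seller: str
    price: Decimal
    currency: str
    in_stock: bool
    rating: Optional[float] = None


def parse_price(raw: str) -> Decimal:
    """Convert a US-style display price such as '$1,299.99' into a Decimal."""
    match = re.search(r"\d[\d,]*(?:\.\d+)?", raw)
    if match is None:
        raise ValueError(f"no price found in {raw!r}")
    return Decimal(match.group(0).replace(",", ""))


def validate(record: ProductRecord) -> list[str]:
    """Return a list of problems; an empty list means the record passed."""
    problems = []
    if not record.sku:
        problems.append("missing sku")
    if record.price <= 0:
        problems.append("non-positive price")
    if record.rating is not None and not 0 <= record.rating <= 5:
        problems.append("rating out of range")
    return problems
```

Using `Decimal` rather than `float` avoids rounding surprises when prices are compared or summed later.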

Is It Reliable in the Long Term?

E-commerce scraping is ongoing. It’s not project-based. Sites change layouts. They add bot protection. They modify page logic regularly. An e-commerce-ready web scraping service handles this through built-in monitoring, maintenance, and adaptation. Reliability over months and years matters far more than a single successful scrape.
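
A common symptom of a layout change is a field that suddenly comes back empty. The sketch below shows one simple way built-in monitoring could catch that, by comparing per-field fill rates between runs. The function names and the 20% drop threshold are assumptions, not a description of any vendor's monitoring.

```python
def fill_rates(records: list[dict], fields: list[str]) -> dict[str, float]:
    """Share of records in which each field is present and non-empty."""
    total = len(records)
    if total == 0:
        return {field: 0.0 for field in fields}
    return {
        field: sum(1 for r in records if r.get(field) not in (None, "")) / total
        for field in fields
    }


def degraded_fields(previous: dict[str, float], current: dict[str, float],
                    max_drop: float = 0.2) -> list[str]:
    """Fields whose fill rate fell by more than `max_drop` since the previous run."""
    return sorted(
        field for field, rate in current.items()
        if previous.get(field, 0.0) - rate > max_drop
    )
```

If `seller` was filled in every record yesterday and in none today, `degraded_fields` returns `["seller"]`, which is a strong hint that the page structure changed rather than the market.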

In short, e-commerce-ready scraping services are built for business continuity and decision-making. Generic scraping tools are built just for data extraction. That difference determines whether your scraped data becomes a strategic asset or just another broken feed. When evaluating the best web scraping services for e-commerce, these capabilities should be non-negotiable.

How We Evaluated the Best Web Scraping Services for E-Commerce

This guide uses publicly available information. We looked at documented capabilities, published case studies, and customer references.

The goal isn’t to pick a technical winner. We want to help you understand which services actually work for e-commerce workflows. Our evaluation is based on how these platforms are positioned, used, and supported in practice.

Here are the criteria we used to evaluate each service.

- E-Commerce Coverage

- Data Accuracy and Consistency

- Scale and Frequency Support

- Ease of Use

- Compliance and Responsible Scraping

- Support and Service Model

E-Commerce Coverage

We checked whether providers explicitly support major marketplaces. That includes Amazon, Walmart, and Target. We also looked at direct-to-consumer sites. Services designed only for generic websites or limited domains scored lower on e-commerce readiness.

Data Accuracy and Consistency

We used public documentation and customer examples. We looked at how providers handle SKU-level accuracy, product variants, seller attribution, and repeatability over time. For e-commerce teams, consistency across runs matters more than one successful extraction.

Scale and Frequency Support

We evaluated whether a service is designed for continuous, high-volume e-commerce tracking or mainly for one-off scraping projects.

Ease of Use

We considered how services are positioned. Are they for business users or developers? We looked at onboarding needs, data delivery formats, and whether you need ongoing technical help.

Compliance and Responsible Scraping

We reviewed how providers publicly address robots.txt, site terms, data privacy, and responsible data collection. Services that clearly explain compliance practices and risk mitigation were rated higher.
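
For readers unfamiliar with robots.txt, the sketch below shows how a crawler can check a URL against a site's rules using Python's standard `urllib.robotparser` module. The rules, the `example-bot` user agent, and the `shop.example.com` URLs are made up for the example, and the rules are supplied inline so it runs without network access.

```python
from urllib.robotparser import RobotFileParser

# Example rules supplied inline; a real crawler would fetch the site's own file.
ROBOTS_TXT = """\
User-agent: *
Disallow: /checkout/
Disallow: /account/
"""


def build_parser(robots_txt: str) -> RobotFileParser:
    """Parse robots.txt text into a parser that can answer can_fetch() queries."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser


parser = build_parser(ROBOTS_TXT)
print(parser.can_fetch("example-bot", "https://shop.example.com/products/123"))
print(parser.can_fetch("example-bot", "https://shop.example.com/checkout/cart"))
```

Under these rules the product page is allowed and the checkout path is not. Honoring robots.txt is only one part of responsible scraping. Site terms and privacy rules still need their own review.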

Support and Service Model

We checked whether providers offer managed services, monitoring, and ongoing maintenance, or whether you are expected to handle scraping logic and failures yourself as sites change.

This approach keeps the evaluation grounded, transparent, and aligned with how e-commerce teams actually choose scraping partners. It’s based on fit, sustainability, and business impact rather than isolated performance claims. We’ve focused on e-commerce web scraping services that deliver real operational value.

Best Web Scraping Services for E-Commerce Companies

- ScrapeHero

- Bright Data

- Oxylabs

- Zyte

- ScraperAPI

- Apify

- PromptCloud

1. ScrapeHero

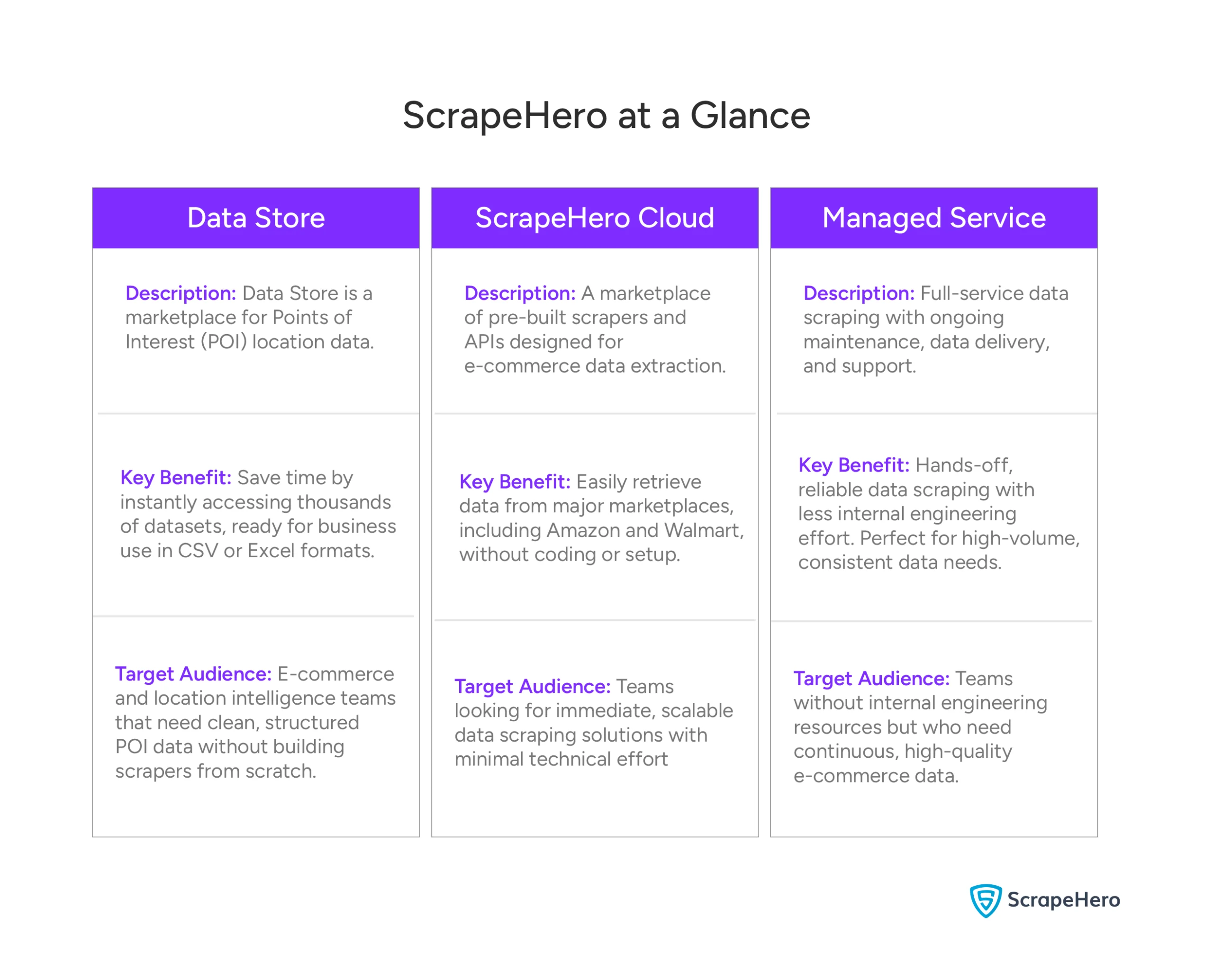

ScrapeHero stands out as a hybrid e-commerce data solution. It combines managed data delivery, custom APIs, pre-built scrapers, and a data marketplace. This supports both technical and non-technical teams. It’s not just about scraping pages.

It’s about delivering clean, structured, business-ready data. This makes it one of the most reliable web scraping solutions for online stores across pricing, marketplace monitoring, catalog enrichment, and workflow automation.

What ScrapeHero Actually Does

ScrapeHero is built around multiple service pillars. Together, they address real e-commerce data needs.

- E-commerce Data Extraction

- Data Store

- ScrapeHero Cloud

- Web Scraping API

- Robotic Process Automation (RPA)

E-commerce Data Extraction

ScrapeHero’s web scraping service pulls structured data. That includes prices, stock levels, product details, seller information, and reviews. It works on major e-commerce sites and marketplaces. It supports both direct-to-consumer (DTC) sites and large marketplaces like Amazon, Walmart, Target, and others.

Key outputs:

- Price, discount, and promotion data

- SKU and variant-level availability

- Seller listings and Buy Box status

- Review text and rating attributes

Data Store

ScrapeHero’s Data Store is a marketplace for Points of Interest (POI) data. It offers accurate, updated, and ready-to-use location datasets covering thousands of brands globally. You can instantly download data on store openings and closures, parking availability, in-store pickup options, services, subsidiaries, nearest competitor stores, and more, all organized by geography and industry.

Delivery is available in CSV or Excel formats, saving you the time and effort of building scrapers from scratch for location intelligence or enrichment.

ScrapeHero Cloud

ScrapeHero Cloud is a comprehensive platform offering both ready‑made scrapers and enterprise‑grade e‑commerce APIs.

Ready‑Made Scrapers Marketplace

Instantly access prebuilt scrapers for major marketplaces, including Amazon and Walmart. With just a few clicks, you can collect product pricing, reviews, and availability without writing code.

Enterprise‑Grade E‑Commerce APIs

For organizations needing dedicated, scalable infrastructure, ScrapeHero Cloud hosts a large collection of enterprise‑grade e‑commerce APIs. These APIs are built for high‑volume data extraction from e-commerce sites.

Also, if you need a custom API for a specific e-commerce site, ScrapeHero can build one in as little as 3–5 business days.

Web Scraping API

For teams that want more control, ScrapeHero offers a custom Web Scraping API. It handles common technical challenges behind the scenes. That includes proxy rotation, rendering, and data extraction. This API supports e-commerce endpoints and general web harvesting. So you can integrate scraping into internal systems or workflows.

What this means:

- Flexible integration into analytics or BI pipelines

- Standardized API responses (JSON/CSV/XML)

- Handling of site changes and bot mitigation

Robotic Process Automation (RPA)

ScrapeHero’s Robotic Process Automation (RPA) connects web data extraction with automated workflows. It lets you move from raw data to action without manual steps. RPA can trigger actions based on extracted data. It integrates with your systems to run tasks on a schedule or when certain conditions are met. This helps e-commerce teams reduce manual work.

Value adds:

- Automated alerts and actions

- Scheduled workflows

- Integration with internal systems (CRM, ERP, pricing engines)

Where ScrapeHero Truly Excels

E-commerce Outcomes, Not Just Extraction

ScrapeHero isn’t positioned as a raw scraper or proxy layer. It’s meant to produce usable e-commerce datasets. This matters because teams can start analysis faster. There’s less manual cleanup and normalization. And data maps to business entities like SKUs, sellers, and promotions. Also, ScrapeHero ensures legal compliance of the entire process.

Managed Service with Optional API Control

Many e-commerce scraping providers force you to choose between managed services (less control) and APIs (more control, more maintenance). ScrapeHero bridges that gap. It offers a managed service for data delivery and maintenance, plus an API layer for flexible integration when you need it. This hybrid model reduces your dependence on internal engineering while keeping your options open.

Best Fit

ScrapeHero is ideal for:

- Pricing, category, and marketplace teams needing business-ready e-commerce data

- Organizations without large internal scraping engineering teams

- Use cases where ongoing data quality, normalization, and automation matter

- Scenarios where output needs to feed dashboards, analytics, or automated workflows

2. Bright Data

Bright Data operates as an all-in-one web data platform. It combines large-scale proxy infrastructure, scraping APIs, and a fully managed service model called Managed Data Acquisition. This positions it differently from API-only or tooling-first providers.

Instead of just offering scraping tools, Bright Data acts like a data concierge. You define your goals. That might be pricing intelligence, competitive tracking, or market analysis. Then Bright Data collects, validates, enriches, and delivers structured datasets and insights.

Where Bright Data Excels

- Managed Data Acquisition

- Enterprise-Grade Infrastructure

- Structured Data and Insights Delivery

- Compliance and Governance

Managed Data Acquisition

Bright Data’s managed offering covers the full lifecycle of data collection. This includes source discovery, scraping, validation, enrichment, and delivery. Delivery happens through dashboards, reports, or structured feeds. For e-commerce teams, this removes the need to manage scrapers, proxies, or site changes internally.

Enterprise-Grade Infrastructure

Bright Data is backed by 150M+ IPs across 195 countries. It has advanced anti-bot and CAPTCHA handling. It uses AI-assisted data discovery. It’s built to support high-volume, multi-marketplace data extraction with strong uptime and scalability.

Structured Data and Insights Delivery

Data can be delivered daily, weekly, or monthly in custom formats. This includes historical datasets and cross-site comparisons. Bright Data also supports dashboards, analytics, and AI-driven enrichment. It goes beyond raw data delivery.

Compliance and Governance

Bright Data emphasizes alignment with GDPR and CCPA. It focuses on ethical data sourcing and adherence to website policies. This is an important consideration for large brands operating at scale.

Best For

Enterprise and large mid-market organizations that need fully managed, SLA-backed e-commerce data collection and operate across many retailers or geographies.

3. Oxylabs

Oxylabs positions itself as a multipurpose scraping platform with strong e-commerce capabilities. It combines a powerful Web Scraper API, an e-commerce-focused data offering, and large-scale proxy infrastructure. It works well for teams that want flexibility and performance. You can customize how e-commerce data is collected and processed.

What Oxylabs Offers for E-commerce Data

- Web Scraper API with AI-assisted parsing

- Flexible Extraction Modes

- Geographic Targeting and Proxy Support

- Performance Signals

Web Scraper API with AI-assisted parsing

Oxylabs’ Web Scraper API supports e-commerce data extraction across major marketplaces. That includes Amazon, eBay, and Google Shopping. The API includes an AI-based parser. It’s designed to structure product data from a wide range of e-commerce page types. It’s not limited to a single marketplace.

Depending on the target site, you can extract structured data from:

- Product detail pages

- Search and category listings

- Pricing and availability sections

- Reviews and ratings

This reduces manual parsing effort compared to APIs that return only raw HTML.

Flexible Extraction Modes

The API supports both real-time scraping for immediate data needs and asynchronous, batch-based collection for large-scale or scheduled jobs. It also has built-in crawling and scheduling capabilities, which are uncommon for API-first tools and make it easier to run recurring e-commerce data collection without external orchestration.

Geographic Targeting and Proxy Support

Oxylabs supports country- and postal-code-level targeting across 195 locations. It’s backed by a large proxy network and anti-blocking mechanisms. This is particularly useful for e-commerce pricing and availability that vary by region.

Performance Signals

Oxylabs has published performance results showing high success rates and competitive response times on e-commerce targets such as Amazon. These benchmarks indicate strong technical reliability, though real-world performance will still depend on target sites, volumes, and configurations.

Where Oxylabs Fits Best

Oxylabs is a strong option when you need:

- API-driven access to e-commerce data with structured outputs

- Flexibility to customize downstream workflows

- Geographic targeting for regional pricing and assortment analysis

- Reliable infrastructure for high-volume scraping

Best For

Technical teams or mid-market to enterprise organizations that want high-performance e-commerce scraping APIs, geographic flexibility, and control over data workflows, and that accept the trade-off of higher internal ownership compared to fully managed e-commerce data services.

4. Zyte

Zyte positions itself as a data extraction company. It focuses on turning complex web pages into structured data. It emphasizes speed, reliability, and developer flexibility. It’s best known for maintaining the open-source Scrapy framework. Today, it offers both API-driven tools and managed data extraction services.

What Zyte Offers for E-commerce Scraping

- High-Performance Web Scraping API

- AI-Assisted Product Parsing

- Managed Data Extraction

- Compliance, Support, and Reputation

High-Performance Web Scraping API

Zyte’s Web Scraping API supports e-commerce sites out of the box and is optimized for speed and reliability. In published benchmarks, it showed success rates above 98% on Amazon and recorded the fastest average response time, around 6.61 seconds. On those results, it is one of the fastest e-commerce scrapers available.

The API supports:

- Automatic location selection based on target URLs

- Residential IPs from more than 200 countries

- Headless and non-headless scraping, depending on requirements

AI-Assisted Product Parsing

Zyte provides an AI parser for e-commerce product pages. It can extract structured fields like product titles, prices, availability, images, and descriptions. Parsing can still require manual configuration. But it reduces effort compared to working with raw HTML.

Managed Data Extraction

Beyond APIs, Zyte also offers managed scraping services. Its team builds, runs, and maintains custom data pipelines. Data is delivered in agreed schemas and formats, with no internal engineering effort required from you.

Compliance, Support, and Reputation

- Explicit alignment with GDPR and global privacy regulations

- In-house legal expertise focused on web data compliance

- 24/7 enterprise support available

Best For

Engineering-led or data teams that need fast, reliable, structured e-commerce product data, value API flexibility, and are prepared to own the logic that turns extracted data into pricing, catalog, or competitive insights.

5. ScraperAPI

ScraperAPI is a self-serve web scraping API. It’s built to simplify large-scale data collection. It handles proxies, rendering, and anti-bot challenges behind the scenes. Its strength lies in speed, flexibility, and ease of integration. This makes it a popular choice for developers and data teams running custom e-commerce scraping workflows.

It’s not a managed e-commerce data service. Instead, ScraperAPI serves as a robust extraction layer that you can build on.

What ScraperAPI Offers for E-commerce Data

- General-Purpose Scraper with E-Commerce Endpoints

- Advanced Anti-bot Handling

- Flexible Integration Options

General-Purpose Scraper with E-Commerce Endpoints

ScraperAPI provides a general scraper that works across e-commerce sites. It also has dedicated endpoints for Amazon, Google Shopping, and Walmart. These endpoints can return parsed data for e-commerce product pages, listings, search results, and reviews using ASIN-based requests.

Advanced Anti-bot Handling

- Automatic IP rotation using datacenter, residential, and mobile proxies

- CAPTCHA bypassing and retry logic

- Smart proxy selection to reduce unnecessary rotation and control costs

This allows ScraperAPI to maintain high success rates on heavily protected e-commerce sites.

Flexible Integration Options

You can integrate ScraperAPI in multiple ways:

- REST APIs (open connection and asynchronous)

- Proxy server mode

- SDKs and libraries

- No-code dashboard interface

This makes it powerful enough for experts yet simple enough for smaller teams.

Best For

ScraperAPI is best suited for:

- Developer-led or data teams

- Custom e-commerce scraping projects and experiments

- Teams that want fast, flexible access to e-commerce pages

- Use cases where downstream processing is handled internally

For organizations that need business-ready e-commerce datasets, recurring monitoring, or minimal maintenance, managed or hybrid e-commerce-focused services may be a better fit.

6. Apify

Apify is a full-stack cloud platform for building, running, and managing automated web scraping workflows. It’s designed for teams that want custom logic and control, not pre-packaged e-commerce datasets. Apify supports both self-serve development and fully managed projects, depending on how hands-on you want to be.

What Apify Does Well

- Apify Store

- Custom E-Commerce Workflows

- Automation and Orchestration

- Managed Scraping Service

- Compliance, Support, and Reputation

Apify Store

Apify offers a marketplace of pre-built scrapers for common targets. Examples include Amazon and eBay. This speeds up projects while keeping flexibility.

Custom E-Commerce Workflows

Apify shines when workflows require custom logic:

- Price comparison across multiple retailers

- Product matching across marketplaces using AI (useful when SKUs/IDs differ)

- Handling bundles, variants, and marketplace-specific structures

Automation and Orchestration

- Cloud-based execution with headless browsers (like Puppeteer)

- API access for scheduling, monitoring, and routing outputs

- Integrations to storage, queues, and downstream systems

Managed Scraping Service

For teams that don’t want to build or maintain scrapers, Apify also offers managed web scraping services. Dedicated engineers design, run, and maintain pipelines. Data is delivered in agreed schemas and destinations.

Compliance, Support, and Reputation

- Strong focus on ethical scraping and GDPR compliance

- NDAs and IP ownership retained by the customer

- Enterprise SLAs with dedicated project teams (EU/US time zones)

Best For

Technical and analytics teams that need custom, automated e-commerce workflows, especially for price comparison, AI-based product matching, and complex marketplace logic, and that are comfortable owning or commissioning custom scraping solutions.

7. PromptCloud

PromptCloud positions itself as a full-service web scraping partner. It delivers structured data without requiring internal engineering effort. Its focus on e-commerce, price crawling, and data enrichment makes it a good option for brands, especially teams that want professional support and a predictable data pipeline.

What PromptCloud Offers for E-Commerce Data

- E-commerce Data Extraction Services

- Price Crawling and Analytics Readiness

- Managed Service Model

E-commerce Data Extraction Services

PromptCloud can scrape product details, prices, descriptions, availability, and reviews from e-commerce sites. The service supports both marketplaces and direct-to-consumer stores.

Price Crawling and Analytics Readiness

The platform emphasizes collecting e-commerce price data. This is for competitive monitoring and pricing intelligence. It delivers output in formats ready for analytics tools or internal dashboards.

Managed Service Model

Rather than handing you raw HTML or just APIs, PromptCloud’s team handles scraping configuration. They handle data extraction logic, quality checks, and delivery pipelines. This lowers the barrier for teams without internal data engineering bandwidth.

Where PromptCloud Fits Best

PromptCloud is a strong fit when you want structured e-commerce data delivered reliably without building or maintaining scraping infrastructure. It blends managed scraping with data readiness. This means you don’t need to build parsers or cleaners. Data arrives in analytics-friendly formats. Team support helps ensure continuity as sites change.

Best For

E-commerce pricing, category, and analytics teams that want managed delivery of structured e-commerce data without the overhead of building and maintaining internal scraping infrastructure.

Which Service Is Right for You?

The right scraping service depends on what decisions you’re trying to make and how much operational load your team can carry. Use the guide below to map common e-commerce use cases to the service type that fits each one best.

- Price and Promotion Monitoring

- MAP Compliance Tracking

- Review and Rating Analysis

- Assortment and Availability Tracking

- Competitor Catalog Intelligence

- Legal, Ethical, and Compliance Considerations

- Pricing Models

Price and Promotion Monitoring

If you track prices daily or weekly across marketplaces and DTC sites, you need SKU-level accuracy. You need consistent refreshes and historical tracking.

Best fit: Managed or hybrid services that deliver structured price and promo data on a schedule.

Watch out for: API-only tools that return raw pages and leave price parsing, normalization, and history to your team.
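
Historical tracking is what turns a list of prices into price movements. The sketch below, with a hypothetical `price_changes` helper, takes dated observations for one SKU and returns only the days on which the price moved.

```python
from datetime import date
from decimal import Decimal


def price_changes(history: list[tuple[date, Decimal]]) -> list[tuple[date, Decimal, Decimal]]:
    """Return (day, old_price, new_price) for each day the price differs
    from the previous observation. Input order does not matter."""
    ordered = sorted(history)
    changes = []
    for (_, prev_price), (day, price) in zip(ordered, ordered[1:]):
        if price != prev_price:
            changes.append((day, prev_price, price))
    return changes
```

This only works if refreshes are consistent. A missed day does not break the function, but it does hide when the change actually happened.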

MAP Compliance Tracking

MAP monitoring requires more than price capture. You need seller identification. You need the Buy Box context. And you need alerts when violations occur.

Best fit: Services with marketplace awareness and ongoing monitoring.

Watch out for: Generic scrapers that don’t distinguish sellers or account for marketplace dynamics.
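
To show why seller identification and Buy Box context matter for MAP monitoring, here is a minimal sketch that flags offers priced below a SKU's MAP and lists Buy Box holders first. The `Offer` fields and the `map_violations` helper are assumptions for illustration.

```python
from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class Offer:
    """Hypothetical per-seller offer on a marketplace listing."""
    sku: str
    seller: str
    price: Decimal
    has_buy_box: bool


def map_violations(offers: list[Offer], map_prices: dict[str, Decimal]) -> list[Offer]:
    """Return offers priced below the MAP for their SKU, Buy Box holders first."""
    violations = [
        offer for offer in offers
        if offer.sku in map_prices and offer.price < map_prices[offer.sku]
    ]
    # False sorts before True, so negate has_buy_box to list Buy Box holders first.
    return sorted(violations, key=lambda o: (not o.has_buy_box, o.seller))
```

Without the `seller` field, the same data would only tell you that a low price exists somewhere on the listing, not who to contact about it.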

Review and Rating Analysis

Reviews change constantly and grow over time. You need fresh data, text extraction, and clean joins back to SKUs.

Best fit: Services that deliver structured review text, ratings, timestamps, and product identifiers.

Watch out for: Tools that scrape reviews once but don’t support recurring pulls or data joins.
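
A "clean join back to SKUs" can be as simple as grouping reviews by product identifier and keeping track of the ones that don't match. The sketch below assumes each review is a dict with `sku` and `rating` keys, which is an illustrative format rather than any provider's schema.

```python
from collections import defaultdict


def average_rating_by_sku(
    reviews: list[dict], known_skus: set[str]
) -> tuple[dict[str, float], list[dict]]:
    """Group reviews by SKU and average their ratings.

    Reviews whose SKU is not in `known_skus` are returned separately so
    that broken joins are visible instead of silently dropped.
    """
    ratings = defaultdict(list)
    unmatched = []
    for review in reviews:
        sku = review.get("sku")
        if sku in known_skus:
            ratings[sku].append(review["rating"])
        else:
            unmatched.append(review)
    averages = {sku: sum(vals) / len(vals) for sku, vals in ratings.items()}
    return averages, unmatched
```

A growing `unmatched` list is often the first sign that product identifiers have drifted between the review feed and the catalog.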

Assortment and Availability Tracking

This use case breaks quickly if data isn’t refreshed or normalized correctly.

Best fit: Services that support frequent crawling, variant handling, and availability flags at scale.

Watch out for: One-off scraping jobs or tools that struggle with dynamic stock indicators.
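
Stock messages vary widely across sites, so availability usually has to be normalized into a small set of flags. The sketch below uses a hypothetical `availability_flag` helper with a few example phrases. A real mapping would need many more.

```python
def availability_flag(raw: str) -> str:
    """Map free-text stock messages to 'in_stock', 'out_of_stock', or 'unknown'."""
    text = raw.strip().lower()
    out_markers = ("out of stock", "sold out", "currently unavailable")
    in_markers = ("in stock", "add to cart", "available")
    # Check out-of-stock phrases first: "unavailable" contains "available".
    if any(marker in text for marker in out_markers):
        return "out_of_stock"
    if any(marker in text for marker in in_markers):
        return "in_stock"
    return "unknown"
```

The ordering of the two checks is the kind of detail that breaks quickly when normalization is done ad hoc. Returning `unknown` instead of guessing keeps bad flags out of downstream reports.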

Competitor Catalog Intelligence

Catalog tracking requires depth and continuity, not just page access.

Best fit: Services that can map categories, variants, and product attributes consistently over time.

Watch out for: API tools that fetch pages but leave catalog modeling entirely to you.

Legal, Ethical, and Compliance Considerations

Scraping isn’t just a technical decision. For brands, how data is collected matters as much as what data is collected.

Key points to consider:

- Robots.txt and site terms: Responsible providers clearly address how they approach publicly accessible data and site rules.

- Privacy regulations: For US brands, considerations like the CCPA and consumer data handling should be part of the provider’s operating model.

- Brand risk: Poor scraping practices can lead to blocked access, unreliable data, or reputational risk.

Practical takeaway:

Choose providers who openly discuss responsible scraping and long-term access, not just success rates.

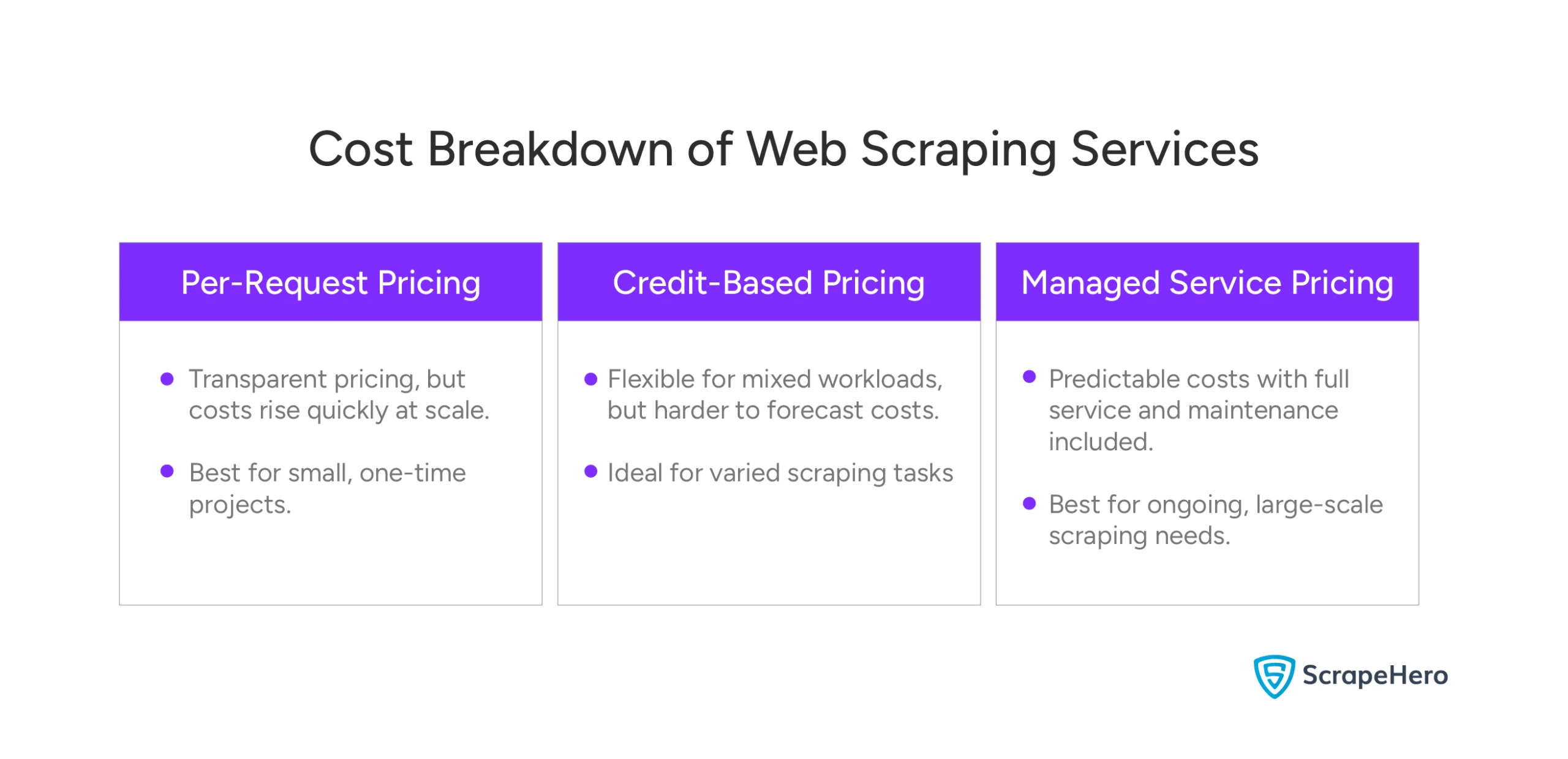

Pricing Models: What You’re Really Paying For

Scraping costs are often misunderstood. The headline price rarely reflects the full cost of ownership.

Per-Request Pricing

You pay for each page or request made.

Pros: Transparent at a small scale.

Cons: Costs can spike quickly with frequent monitoring or large catalogs. Doesn’t include data cleanup or maintenance.
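
A quick calculation shows how per-request costs scale with monitoring frequency. The sketch below assumes one request per SKU per site per run and a flat rate of $2 per 1,000 requests. Both are hypothetical figures chosen for the arithmetic, not any vendor's pricing.

```python
def monthly_requests(skus: int, sites: int, runs_per_day: int, days: int = 30) -> int:
    """Requests per month, assuming one request per SKU per site per run."""
    return skus * sites * runs_per_day * days


def monthly_cost(requests: int, price_per_1k: float) -> float:
    """Cost at a flat, hypothetical rate per 1,000 requests."""
    return requests / 1000 * price_per_1k


daily = monthly_requests(skus=5_000, sites=4, runs_per_day=1)
hourly = monthly_requests(skus=5_000, sites=4, runs_per_day=24)
print(daily, monthly_cost(daily, price_per_1k=2.0))    # 600000 1200.0
print(hourly, monthly_cost(hourly, price_per_1k=2.0))  # 14400000 28800.0
```

Moving the same 5,000-SKU catalog from daily to hourly checks multiplies the request count, and the bill, by 24, before any retries, rendering surcharges, or cleanup work are counted.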

Credit-Based Pricing

Requests, rendering, or computing are bundled into credits.

Pros: Flexible for mixed workloads.

Cons: Harder to forecast costs for ongoing e-commerce monitoring.

Managed Service Pricing

You pay for outcomes, not requests.

Pros: Predictable costs, structured data, maintenance included.

Cons: Less granular control over scraping mechanics.

Hidden Costs to Factor In

Even “cheap” APIs carry operational costs:

- Engineering time to build and maintain scrapers

- Breakages when sites change

- Data cleaning and normalization

- Missed decisions due to incomplete or late data

For many e-commerce teams, these hidden costs outweigh the difference in vendor pricing.

Choosing a Scraping Partner, Not Just a Tool

E-commerce scraping creates value only when it’s reliable and outcome-driven. One-off data pulls and fragile scripts don’t support pricing, marketplace, or brand decisions. What matters is consistent, accurate data that shows up on time and holds up as sites change.

Most teams eventually outgrow DIY scraping. What starts as a quick internal setup often turns into ongoing maintenance. You get broken scrapers, data cleanup, and engineering dependency. As catalogs grow and monitoring becomes continuous, scraping becomes an infrastructure component, not a side project.

A specialized e-commerce scraping partner makes sense when you need:

- Ongoing visibility into prices, promotions, availability, and sellers

- SKU-level accuracy across marketplaces

- Structured data that teams can act on without heavy processing

At that point, the decision is less about tools. It’s more about who can deliver dependable e-commerce data at scale.

If your goal is to support pricing intelligence, MAP compliance, review tracking, or assortment monitoring, exploring an e-commerce-focused option like ScrapeHero is a practical next step. Choosing the best web scraping service for e-commerce ensures you get both reliability and business outcomes.

Why ScrapeHero Is the Best E-commerce Web Scraping Service

- Handles Complex Scraping Challenges

- Data Quality is a Core Product

- Human-Centered Service Model

- Built for Reliability and Results

ScrapeHero has built its reputation on serving thousands of customers—from fast-growing brands to Fortune 50 enterprises—with consistent, accurate data delivered month after month.

Handles Complex Scraping Challenges

ScrapeHero addresses technical obstacles such as CAPTCHA, JavaScript rendering, IP blocks, and layout changes. With self-healing infrastructure, you don’t waste time fixing broken scrapers.

Data Quality is a Core Product

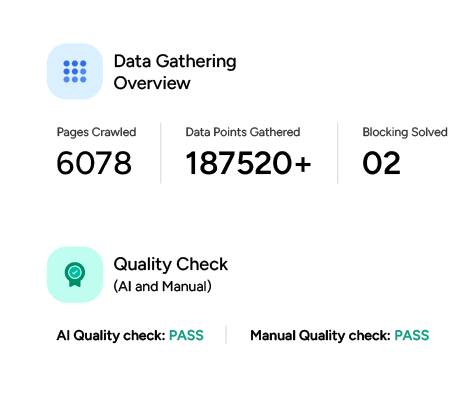

ScrapeHero combines AI-powered validation with manual checks to ensure outputs are ready for analysis—not raw dumps you have to clean yourself. Automated monitoring catches changes before they disrupt your pipeline, giving you confidence in price, inventory, and catalog intelligence.

Human-Centered Service Model

What sets ScrapeHero apart is its approach to customer support. Real experts respond quickly, help tailor solutions to your needs, and work with you as a partner rather than a vendor. Customization, flexible delivery formats, and honest pricing mean you avoid hidden costs and get data that fits your goals.

Built for Reliability and Results

Whether you need ongoing feeds or one-off insights, ScrapeHero combines technology, quality assurance, and support to make scraping practical, dependable, and directly tied to decision outcomes. That’s why it ranks among the best web scraping services for e-commerce available today.

Contact ScrapeHero to see how it can power your e-commerce data strategy.

FAQ

Web scraping services enable e-commerce businesses to automatically extract and analyze large volumes of data from competitor websites, product listings, and online marketplaces. This helps in tracking competitor pricing, inventory levels, product trends, and customer reviews, enabling businesses to make informed pricing and marketing decisions.

Web scraping helps e-commerce businesses by providing real-time access to competitive insights, market trends, and product data. It enhances decision-making with automated monitoring of pricing strategies, stock levels, and promotions, giving businesses a competitive edge in the market.

The best services for scraping competitor prices include ScrapeHero, Octoparse, and Bright Data. These platforms offer customizable scraping tools that efficiently gather pricing data across various e-commerce sites, allowing businesses to monitor competitor prices and adjust their own strategies accordingly.

When comparing e-commerce web scraping services, ScrapeHero stands out for its highly customizable, scalable solutions tailored to specific business needs. Unlike other services, ScrapeHero offers real-time data collection, handles complex websites with ease, and delivers accurate pricing insights, making it the go-to option for businesses seeking a competitive advantage in e-commerce.