Looking for the best web scraping tools in 2026?

The right web scraper automates data collection from any website — handling JavaScript rendering, bypassing bot detection, and delivering clean, structured output in CSV, JSON, or Excel. Whether you’re a developer building pipelines or a business analyst with no coding background, today’s tools cover every skill level and budget.

The sheer volume of data available on the web today makes manual collection not just impractical — it’s essentially impossible at any meaningful scale. The best web scraping tools solve this by automating the entire process of extracting, structuring, and storing large datasets quickly and reliably.

From tracking competitor prices to generating sales leads and powering market research, web data extraction software saves time, eliminates human error, and scales effortlessly across complex, JavaScript-heavy websites.

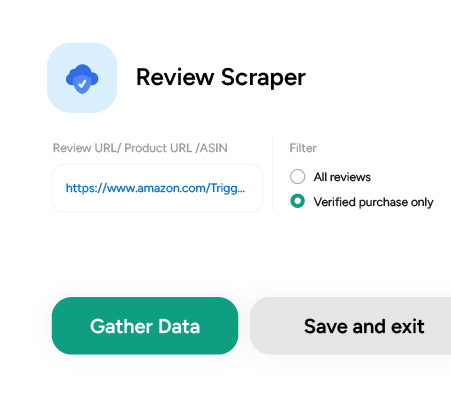

With ScrapeHero Cloud, you can download data in just two clicks! Don’t want to code? ScrapeHero Cloud is exactly what you need.

15 Best Web Scraping Software and Tools in 2026

Below is a curated roundup of the best web scraping tools available right now — spanning free, open-source, no-code, and enterprise-grade options — so you can find the right fit for your specific use case.

- ScrapeHero Cloud

- Web Unlocker - Bright Data

- Web Unblocker - Oxylabs

- Octoparse

- Scrapy

- Puppeteer

- Playwright

- Cheerio

- ParseHub

- Web Scraper.io

- Apify

- Browse AI

- SerpAPI

- Clay.com

- Selenium

1. ScrapeHero Cloud

When it comes to the best web scraping tools for teams that want results without infrastructure headaches, ScrapeHero Cloud is the standout choice.

ScrapeHero is an enterprise-grade data extraction service provider — and ScrapeHero Cloud is their self-service marketplace that brings that same enterprise power to individual users and small teams.

The platform offers pre-built crawlers and real-time APIs for the world’s most-scraped websites — Amazon, Google, Walmart, Zillow, and dozens more. You don’t write a single line of code; you just point, configure, and download your data.

What sets ScrapeHero Cloud apart from other web scraping tools in its class is the combination of reliability, flexibility, and genuine ease of use.

You’re not wrestling with proxies, browser automation, or anti-bot countermeasures — ScrapeHero’s infrastructure handles all of it silently in the background.

Features

- Pre-built crawlers require zero technical expertise — if you can fill out a form, you can scrape a website.

- Flexible scheduling lets you run scrapers hourly, daily, or weekly, so your datasets stay current without manual intervention.

- Data exports in JSON, CSV, and Excel make it easy to plug results directly into your existing tools and workflows.

- Generous free tier plus affordable paid plans scale smoothly from individual researchers to enterprise data teams.
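As a rough illustration of how JSON and CSV exports fit into an existing workflow, the sketch below converts a JSON export into CSV text using only Python's standard library. The record fields (`name`, `price`, `url`) and the sample values are assumptions made up for this example; they are not taken from an actual ScrapeHero Cloud export.

```python
import csv
import io
import json

# Hypothetical records shaped like a typical JSON export.
# The field names and values are assumptions for illustration only.
JSON_EXPORT = """[
  {"name": "Widget A", "price": 19.99, "url": "https://example.com/a"},
  {"name": "Widget B", "price": 24.5, "url": "https://example.com/b"}
]"""


def json_to_csv(json_text: str) -> str:
    """Convert a JSON array of flat objects into CSV text."""
    records = json.loads(json_text)
    if not records:
        return ""
    buffer = io.StringIO()
    # Use the keys of the first record as the CSV header.
    writer = csv.DictWriter(
        buffer, fieldnames=list(records[0].keys()), lineterminator="\n"
    )
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()


csv_text = json_to_csv(JSON_EXPORT)
print(csv_text)
```

The same records could be loaded into a spreadsheet or a database just as easily; the point is only that a flat JSON export maps directly onto rows and columns.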

How to Use ScrapeHero Cloud

Here’s a quick walkthrough using the ScrapeHero Trulia Scraper as an example:

- Sign up or log in to your ScrapeHero Cloud account.

- Navigate to the Trulia Scraper in the marketplace.

- Click Create New Project.

- Paste in the Trulia search results URL for your target query.

- Enter your project name, URL, and desired record count — then hit Gather Data.

- Monitor progress in real time under the Projects tab.

- Once complete, open your project → Overview → Download Data → select Excel or CSV and you’re done.

The whole process takes under five minutes for a first-time user. That’s not marketing copy — it’s genuinely that straightforward.

Pricing

- ScrapeHero Cloud’s free Basic Plan includes 400 data credits, 1 concurrent job, 7-day data retention, 10 API calls per minute, and standard rotating proxies.

- Paid plans deliver up to 4,000 credits per dollar, and fully custom enterprise plans are available for high-volume or specialized requirements.

2. Web Unlocker - Bright Data

Bright Data’s Web Unlocker is a well-regarded web scraping tool built specifically for bypassing anti-bot defenses. Rather than exposing raw proxy infrastructure, it abstracts the entire unblocking layer so developers can focus on data, not evasion mechanics.

Features

- Manages browser fingerprinting, cookies, and CAPTCHA solving automatically.

- Routes requests through rotating residential IPs to avoid detection.

- Adapts in real-time as target websites update their blocking strategies.

- 24/7 live customer support for enterprise subscribers.

Pricing

Tiered model from pay-as-you-go to enterprise custom pricing. Entry price is $1.05 per 1,000 requests.
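To get a feel for what the entry price means at volume, here is a back-of-the-envelope estimate in Python. It assumes billing scales linearly at $1.05 per 1,000 requests with no volume discounts or minimums, which may not match Bright Data's actual billing rules; `estimate_cost` is a hypothetical helper written for this example.

```python
from decimal import Decimal

# Entry price quoted above: $1.05 per 1,000 requests.
PRICE_PER_1000 = Decimal("1.05")


def estimate_cost(requests: int) -> Decimal:
    """Estimate spend in dollars, assuming simple linear pricing (an assumption)."""
    cost = Decimal(requests) / 1000 * PRICE_PER_1000
    return cost.quantize(Decimal("0.01"))


print(estimate_cost(250_000))  # 250 blocks of 1,000 requests at $1.05 each
```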

3. Web Unblocker - Oxylabs

Oxylabs’ Web Unblocker is an AI-augmented data extraction tool that handles the complexity of modern anti-scraping systems so you don’t have to. It’s a strong choice for developers who need a proxy-like interface with built-in intelligence.

Features

- Proxy-style integration with native JavaScript rendering support.

- Clean usage dashboard for tracking request volumes and success rates.

- Session persistence across multiple requests using the same proxy connection.

Pricing

One-week free trial available. Post-trial pricing begins at $75/month + VAT for 8 GB, billed monthly.

4. Octoparse

Octoparse is one of the most accessible web scraping tools for non-technical users. Its visual, point-and-click workflow means you can build a fully functional scraper without writing a single line of code.

Features

- Scheduled cloud extraction keeps dynamic datasets refreshed automatically.

- Built-in Regex and XPath support for automated data cleaning post-extraction.

- Rotating IP proxies to handle reCAPTCHA and site blocking.

- Advanced mode gives experienced users fine-grained control over complex scraping logic.
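The regex-based cleaning mentioned in the feature list is the kind of step that turns raw scraped strings into usable values. The sketch below shows the general idea in plain Python; it is not Octoparse's own syntax or configuration, and the sample strings and the `clean_price` helper are assumptions for illustration. It only handles US-style numbers (comma as thousands separator, dot as decimal point).

```python
import re

# Hypothetical raw values as they might come out of a scraper.
RAW_PRICES = ["$1,299.00", "USD 45", " 19.99 USD ", "Call for price"]

# Digits with optional thousands separators and an optional decimal part.
_NUMBER = re.compile(r"\d[\d,]*(?:\.\d+)?")


def clean_price(raw: str):
    """Return the first number found in the string as a float, or None."""
    match = _NUMBER.search(raw)
    if match is None:
        return None
    return float(match.group().replace(",", ""))


cleaned = [clean_price(value) for value in RAW_PRICES]
print(cleaned)
```

Values with no number at all come back as `None`, so they can be filtered or flagged rather than silently turned into zero.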

Pricing

Free plan supports up to 10 tasks per account. Standard plan starts at $119/month. Enterprise plans are available on request.

5. Scrapy

Scrapy remains one of the most powerful and trusted open-source web scraping frameworks available. Built in Python, it gives developers full control over the entire crawl-and-extract pipeline — from request scheduling to data storage.

Features

- Asynchronous architecture built on the Twisted networking framework for high-throughput crawling.

- Native export to JSON, CSV, and XML.

- Extensive documentation, active community, and a rich ecosystem of plugins and extensions.

- Cross-platform: Linux, macOS, and Windows.
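To show why an asynchronous architecture helps throughput, the sketch below runs several simulated page fetches concurrently with a cap on how many are in flight at once. It uses Python's standard `asyncio` rather than Scrapy or Twisted, so it illustrates the concept only and is not Scrapy's API. `fake_fetch` is a stand-in that sleeps instead of making a real HTTP request, and the URLs are placeholders.

```python
import asyncio


async def fake_fetch(url: str) -> str:
    """Stand-in for a network request (hypothetical; no real HTTP is performed)."""
    await asyncio.sleep(0.01)  # simulate network latency
    return f"<html>{url}</html>"


async def crawl(urls, max_concurrency: int = 3):
    """Fetch all URLs concurrently, with at most max_concurrency in flight."""
    semaphore = asyncio.Semaphore(max_concurrency)

    async def bounded_fetch(url: str) -> str:
        async with semaphore:
            return await fake_fetch(url)

    # gather() returns results in the same order as the input URLs.
    return await asyncio.gather(*(bounded_fetch(u) for u in urls))


urls = [f"https://example.com/page/{n}" for n in range(1, 6)]
pages = asyncio.run(crawl(urls))
print(len(pages))
```

While one request waits on the network, others proceed, which is the property that lets an asynchronous crawler keep many requests moving without one thread per request.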

Pricing

Completely free and open-source.

6. Puppeteer

Puppeteer is a Node.js library that gives developers programmatic control over Chrome or Chromium, which it runs headless by default. It’s especially valuable for scraping dynamic, JavaScript-heavy pages that simpler HTTP-based tools can’t handle.

Features

- Open-source and ideal for pages where content is rendered via JavaScript or API calls.

- Screenshot and PDF generation for visual page capture.

- Automates complex browser interactions — form submissions, keyboard input, UI testing.

- Access to the latest Chrome browser features and JavaScript capabilities.

Pricing

Free and open-source.

7. Playwright

Playwright, developed by Microsoft, has quickly become a go-to tool among developers who need robust, cross-browser scraping and automation. It improves on Puppeteer with broader browser support and a more modern API.

Features

- Cross-browser support across Chromium, WebKit, and Firefox from a single codebase.

- Built to reduce test flakiness and speed up browser automation workflows.

- Runs in Docker and integrates with CI/CD systems such as Azure Pipelines, CircleCI, and Jenkins.

Pricing

Free and open-source.

2026 Trend Spotlight: AI is Reshaping the Best Web Scraping Tools

What’s changed: The best web scraping tools in 2026 have moved beyond static selectors. Leading platforms now use LLMs and Vision-Language Models to extract data by semantic intent rather than fragile CSS or XPath rules, making scrapers dramatically more resilient to site redesigns.

By the numbers (from our hands-on testing this quarter):

Bottom line: Open-source tools like Scrapy and Playwright remain solid foundations. But for zero-maintenance, enterprise-scale extraction, AI-first managed platforms now operate in a league of their own.

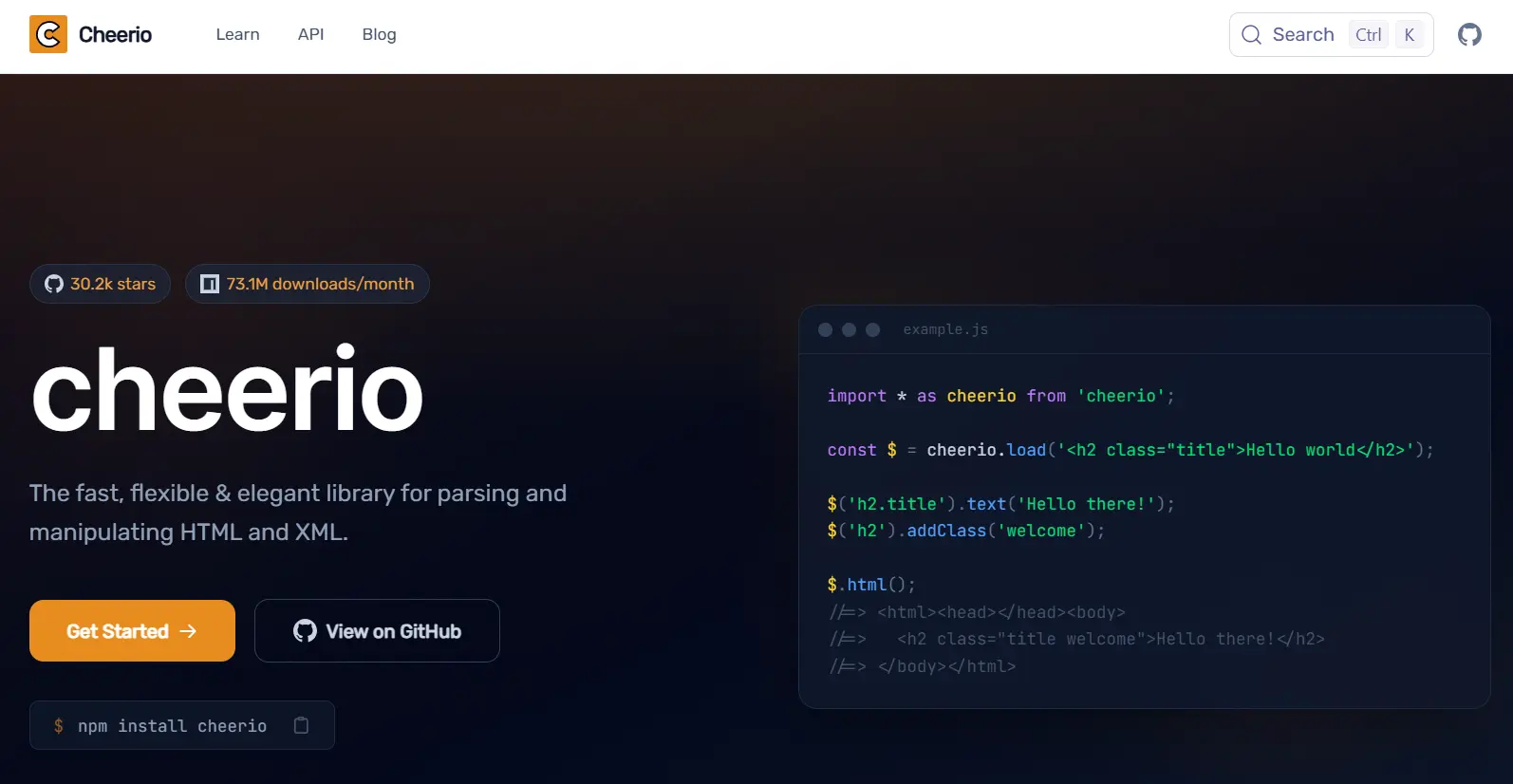

8. Cheerio

Cheerio is a lightweight JavaScript library for parsing HTML and XML — ideal for web scraping scenarios where you don’t need a full browser environment and want maximum speed.

Features

- Familiar jQuery-style syntax for selecting and manipulating DOM elements.

- Extremely fast because it skips CSS rendering, image loading, and JavaScript execution entirely.

- Well-suited for parsing large HTML documents with minimal memory overhead.
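The browser-free approach is easy to see in a small example. Cheerio itself is a JavaScript library; the sketch below shows the same idea (parsing markup directly, with no rendering or script execution) using Python's standard `html.parser`. The HTML snippet and the `LinkCollector` class are assumptions written for this illustration and do not use Cheerio's API.

```python
from html.parser import HTMLParser

# Hypothetical markup standing in for a downloaded page.
HTML = """
<ul>
  <li class="product"><a href="/a">Widget A</a></li>
  <li class="product"><a href="/b">Widget B</a></li>
</ul>
"""


class LinkCollector(HTMLParser):
    """Collect (text, href) pairs for every <a> element that has an href."""

    def __init__(self):
        super().__init__()
        self.links = []
        self._current_href = None

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            self._current_href = dict(attrs).get("href")

    def handle_data(self, data):
        # Only record text that appears inside an <a href="..."> element.
        if self._current_href is not None and data.strip():
            self.links.append((data.strip(), self._current_href))

    def handle_endtag(self, tag):
        if tag == "a":
            self._current_href = None


collector = LinkCollector()
collector.feed(HTML)
print(collector.links)
```

Because nothing is rendered and no scripts run, this style of parsing is fast, but it only sees what is already present in the HTML the server returned.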

Pricing

Free and open-source.

9. ParseHub

ParseHub is a user-friendly web scraping tool that handles sites with complex interactivity that trips up simpler tools. Its machine learning-powered parser makes short work of pagination, dynamic content, and nested navigation.

Features

- Handles JavaScript, AJAX, cookies, sessions, and automatic redirections natively.

- ML-powered extraction engine for complex site structures, with output in JSON, CSV, Google Sheets, or via API.

- Manages infinite scroll pages, pop-up dialogs, and dropdown menus.

- Native Tableau integration for teams that visualize their scraped data.

Pricing

Free plan: 5 public projects, 200 pages per run. Standard plan: $189/month for 20 private projects and up to 10,000 pages per run.

10. Web Scraper.io

Web Scraper.io is a browser-native scraping tool that runs as a Chrome or Firefox extension, making it one of the quickest web scraping tools to get started with: the only thing to install is the extension itself.

Features

- Intuitive point-and-click interface directly within your browser.

- Full JavaScript execution, Ajax request handling, pagination, and infinite scroll support.

- Flexible site map builder using multiple selector types.

- Export to CSV, XLSX, and JSON, or push directly to Dropbox, Google Sheets, or Amazon S3.

Pricing

The browser extension is free. Cloud plans with additional capabilities and parallel task support start at $50/month and scale beyond $200/month.

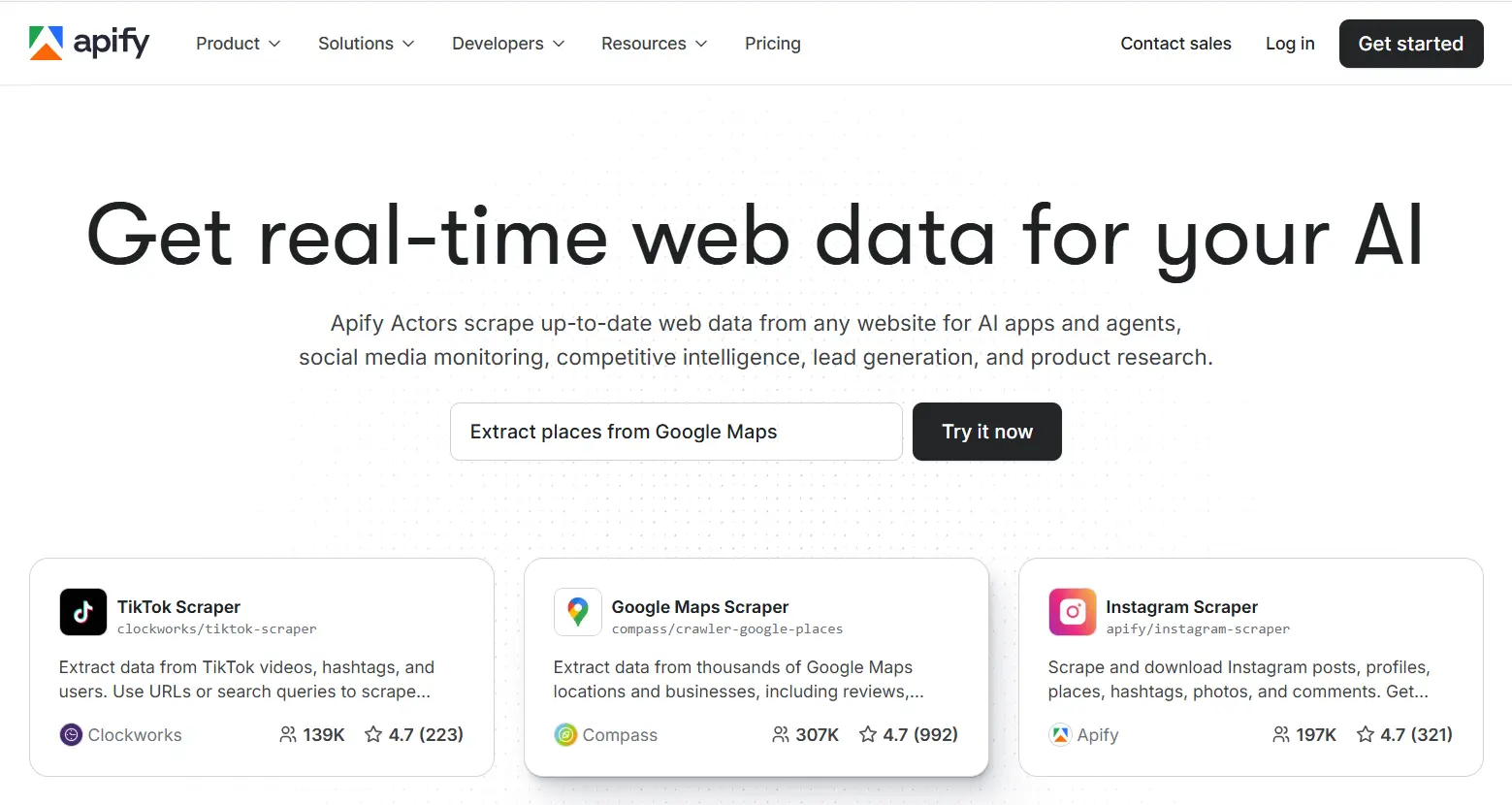

11. Apify

Apify is a cloud-based web scraping and automation platform with a strong ecosystem of ready-made “Actors” — pre-built scrapers you can deploy immediately for common websites and use cases.

Features

- Visual, no-code scraper builder via drag-and-drop.

- Extensive public Actor library covering popular platforms and websites.

- Flexible actor system supports custom scraping logic and automation workflows.

- Native integrations with Zapier, Google Sheets, and Slack for end-to-end pipelines.

Pricing

Free plan available with limited compute. Paid tiers range from individual plans to custom enterprise contracts based on usage.

12. Browse AI

Browse AI is an AI-native web scraping platform that takes a training-based approach — you show it what data you want once, and it learns to extract it reliably at scale. It handles modern anti-bot systems without requiring developer configuration.

Features

- Autonomous bypass of advanced bot detection without manual proxy setup.

- Robust API allows seamless integration with downstream applications and pipelines.

Pricing

Free trial available. Paid plans start at $19/month with tiered resource allocations.

13. SerpAPI

SerpAPI is purpose-built for one specific and highly valuable use case: extracting structured data from search engine results pages (SERPs). If your data needs revolve around SEO, paid search, or keyword intelligence, it’s one of the best web scraping tools for that vertical.

Features

- Extracts organic results, paid ads, featured snippets, titles, URLs, and more from Google, Bing, and DuckDuckGo.

- Keyword rank tracking across engines and geographic locations for SEO monitoring.

- Real-time SERP data via a clean, well-documented API.

Pricing

Free plan with limited requests. Developer plans start at $75/month.

14. Clay.com

Clay.com approaches web scraping from a go-to-market angle — it’s designed specifically to help sales and marketing teams pull structured data from the web without needing engineering support.

Features

- Point-and-click data selection with no code required.

- Automated scheduling ensures your prospect and enrichment data stays fresh.

- Flexible export in CSV, JSON, and Excel.

- Native integration with Google Sheets and Zapier for workflow automation.

Pricing

Tiered plans for individuals through enterprise, priced by feature access and usage volume.

15. Selenium

Selenium is an open-source tool for automating web browsers, often used by experienced developers for web scraping and data extraction. It supports Python, Java, C#, Ruby, and more.

Features

- Direct control of Chrome, Firefox, and Edge for precise, script-driven scraping.

- Full JavaScript execution for accessing dynamic content and data loaded via API calls.

- Headless mode enables background scraping without opening a visible browser window.

- Highly customizable framework with decades of community support, plugins, and documentation.

Pricing

Free and open-source — though it requires meaningful setup effort and coding competence to use effectively.

Why ScrapeHero Stands Apart from Other Web Scraping Tools

Most of the best web scraping tools on this list are excellent at one thing. Scrapy is powerful but technical. Octoparse is approachable but limited at scale. Bright Data handles blocking well but can get expensive fast.

ScrapeHero Cloud occupies a different position entirely. It’s the rare platform that genuinely works for both a first-time user downloading Amazon pricing data on a free plan, and an enterprise team running continuous, large-volume crawls across dozens of sources simultaneously.

Where other web scraping tools require you to manage proxy rotation, CAPTCHA bypass, JavaScript rendering, and data pipeline maintenance yourself — ScrapeHero handles all of that as infrastructure. Your team spends time using data, not maintaining the systems that collect it.

For businesses that have outgrown DIY scraping tools but aren’t ready to build an in-house data engineering function, ScrapeHero’s managed web scraping service represents the most economical and operationally sensible path forward: clean, structured, scalable data — without the overhead.

Frequently Asked Questions

What are the best web scraping tools in 2026?

The best web scraping tools in 2026 include ScrapeHero Cloud, Bright Data, Octoparse, Scrapy, Playwright, and Apify. The right choice depends on your technical skill level, data volume, and whether you need a managed service or prefer to build your own solution.

Why are web data extraction tools important?

Web data extraction tools are essential for automating data collection at scale, saving time, and enabling businesses to surface actionable insights without manual effort, especially as AI-powered scrapers now handle dynamic sites, bot detection, and unstructured data autonomously.

Which web scraping tool is best for non-technical users?

ScrapeHero Cloud is the top pick for non-technical users. It’s a no-code, point-and-click platform where you can download data from major websites in minutes without writing a single line of code. Octoparse and Browse AI are also solid no-code alternatives.

What makes ScrapeHero Cloud different from other web scraping tools?

ScrapeHero Cloud combines enterprise-grade infrastructure with a genuinely beginner-friendly interface. Unlike most web scraping tools that require users to handle proxies, browser automation, or anti-bot evasion themselves, ScrapeHero manages all of that behind the scenes, so you get clean data without operational complexity.

How do AI web scrapers differ from traditional web scraping tools?

Traditional web scraping tools rely on static CSS or XPath selectors that break when a website’s layout changes. AI web scrapers use LLMs and machine learning to understand page content semantically, automatically adapting to layout changes and reducing maintenance overhead by up to 85%.

Is web scraping legal?

Scraping publicly available data is generally legal in most jurisdictions, but legality depends on a website’s Terms of Service, the nature of the data, and how it’s used. Always check a site’s robots.txt and Terms of Service before scraping, and never collect private or personally identifiable information without proper authorization.
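Checking robots.txt can be automated before a scraper requests a page. The sketch below uses Python's standard `urllib.robotparser` on an inline robots.txt so it runs without network access. The rules, the `example-bot` user agent, and the URLs are assumptions for illustration; against a real site you would load the live file with `set_url()` and `read()` instead. Passing this check says nothing about a site's Terms of Service, which still need to be read separately.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt content; a real site's rules will differ.
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def may_fetch(url: str, user_agent: str = "example-bot") -> bool:
    """Return True if the parsed robots.txt rules allow this user agent to fetch the URL."""
    return parser.can_fetch(user_agent, url)


print(may_fetch("https://example.com/products"))
print(may_fetch("https://example.com/private/data"))
```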

What is self-healing scraping?

Self-healing scraping refers to AI-powered extraction systems that automatically detect and repair broken scrapers when a target website changes, with no human intervention required. In 2026, this is one of the most important differentiators among the best web scraping tools, eliminating the recurring maintenance costs that make traditional scrapers expensive to operate.

Can web scraping be used to collect AI training data?

Yes. Web scraping is one of the primary methods for assembling large, high-quality training datasets for AI models. Platforms like ScrapeHero offer structured, scalable data pipelines specifically designed for AI training data acquisition at enterprise scale.

What formats do web scraping tools export data in?

Most modern web scraping tools export data in CSV, JSON, Excel (XLSX), and XML. Many also offer native integrations with Google Sheets, Amazon S3, Dropbox, Airtable, and Notion for seamless downstream workflows.