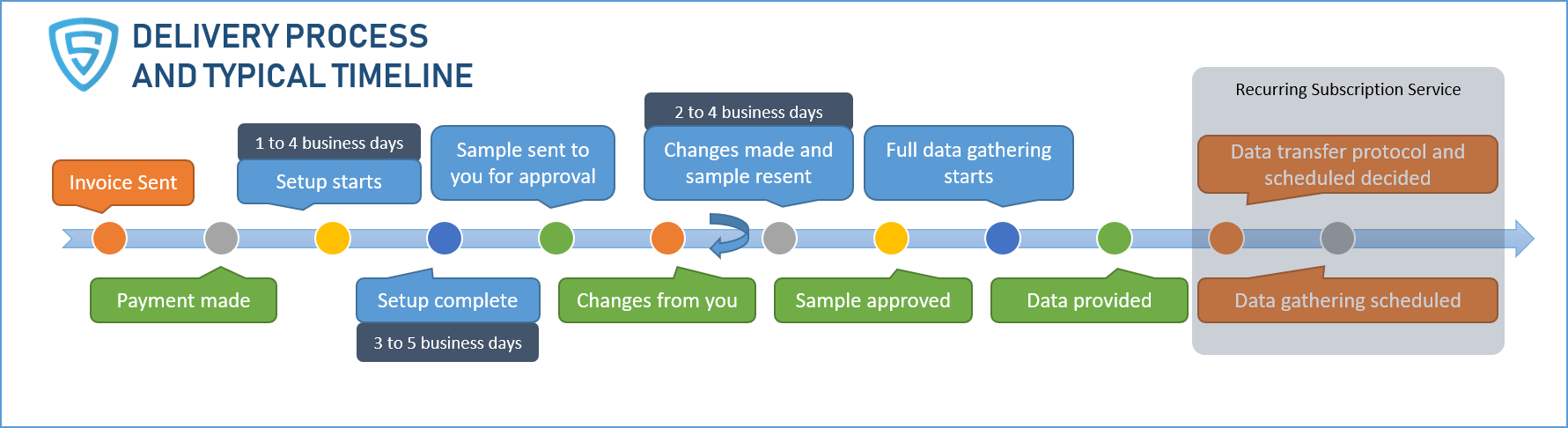

Our Delivery Process and Typical Timeline

1. Requirements

Once you reach out to us, we will collect your requirements by asking a few simple questions about the data sources, the frequency of data gathering, and any custom processing that you might need. If the requirements are complex, we may schedule a call to discuss them; otherwise we gather them over email. Our goal is to make the process as light as possible for you.

2. Quote and Payment

We will review your requirements and send you a quote. Our pricing depends on the complexity of the source websites and the number of pages we scrape. Once you are okay with the pricing, we email you an electronic invoice that is easily payable online using any major credit card.

3. Setup and Sample Data

Once the payment is made, our automated workflow kicks in. We will track your project in a well-established, standard ticketing system and start creating custom scrapers for the different types of pages on the site. Once the scrapers are built, we will collect a sample data set and send it to you for approval.

4. Sample Data Approval

If you require changes to the sample data set, we will make the changes and send you another sample. This may include changing the data format, creating calculated fields, or adjusting how some fields are parsed. For most of our projects, five or fewer iterations are enough to finalize the data format.

5. Full Data Gathering

Once you approve the sample data, we will start collecting the full data. The scrapers run on our highly distributed data collection platform, which can reach speeds of thousands of pages per second, depending on the source website.

6. Quality Checks and Alerts

We will create tests that validate each record, perform spot checks on the dataset, and set up QA checks that monitor each scraper’s metadata, such as the number of pages it crawls and the response codes it receives. We invest heavily in machine learning and artificial intelligence to improve problem detection. You get these benefits at no additional cost, unlike with a provider that simply bills for time and people.
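As a rough illustration of the two kinds of checks described above, here is a minimal Python sketch. It is not our production code: the field names (`url`, `title`, `price`), the function names, and the thresholds are all hypothetical and would differ from project to project.

```python
# Hypothetical schema: the fields every record is expected to carry.
REQUIRED_FIELDS = ("url", "title", "price")


def validate_record(record):
    """Return a list of problems found in one scraped record."""
    problems = []
    for field in REQUIRED_FIELDS:
        value = record.get(field)
        if value is None or value == "":
            problems.append(f"missing {field}")
    price = record.get("price")
    # Only judge the price if it is present; a missing one is reported above.
    if price not in (None, ""):
        if not isinstance(price, (int, float)) or price < 0:
            problems.append("invalid price")
    return problems


def check_crawl_metadata(status_codes, expected_pages,
                         max_error_rate=0.05, min_page_ratio=0.9):
    """Return alerts based on page count and HTTP response codes.

    The thresholds are illustrative assumptions, not recommended values.
    """
    alerts = []
    pages = len(status_codes)
    if pages < expected_pages * min_page_ratio:
        alerts.append(f"crawled {pages} pages, expected about {expected_pages}")
    errors = sum(1 for code in status_codes if code >= 400)
    if pages and errors / pages > max_error_rate:
        alerts.append(f"{errors} of {pages} responses were errors")
    return alerts
```

A record-level check catches individual bad rows, while the metadata check catches run-level problems, such as a crawl that silently returned far fewer pages than usual or a spike in blocked requests.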

7. Data Delivery

8. Recurring Data Delivery

If you need the data on a recurring basis, we create one or more schedules in our fault-tolerant scheduler, which ensures the data is gathered reliably.

9. Ongoing Support, QA and Maintenance

A critical benefit of our subscription plans is that we provide ongoing support, quality assurance, and website maintenance. Websites change their structure periodically, and they also block automated access. As a result, basic scraper maintenance is an integral part of our subscription service. We have built-in health checks that alert us when a website’s structure changes, so that we can update the scrapers and minimize disruption to the data delivery. Our automated and manual data quality checks are also included. These are critical to maintaining a high level of data quality.
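One simple way to picture a structure health check is to confirm that the page elements a scraper depends on are still present. The sketch below uses only the Python standard library and is an assumption-laden illustration, not a description of our actual checks: the function name `missing_markers` and the CSS class names in the usage example are hypothetical.

```python
from html.parser import HTMLParser


class _ClassCollector(HTMLParser):
    """Collect every CSS class name that appears in a page."""

    def __init__(self):
        super().__init__()
        self.classes = set()

    def handle_starttag(self, tag, attrs):
        for name, value in attrs:
            if name == "class" and value:
                self.classes.update(value.split())


def missing_markers(html, expected_classes):
    """Return the expected CSS classes that no longer appear in the page."""
    collector = _ClassCollector()
    collector.feed(html)
    return sorted(set(expected_classes) - collector.classes)


# Usage: a non-empty result suggests the page layout has changed.
page = '<div class="product"><span class="price">9.99</span></div>'
print(missing_markers(page, ["product", "price", "title"]))
```

A check like this would run against a few known pages on each crawl; when an expected marker disappears, the scraper is flagged for maintenance before bad data reaches a delivery.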