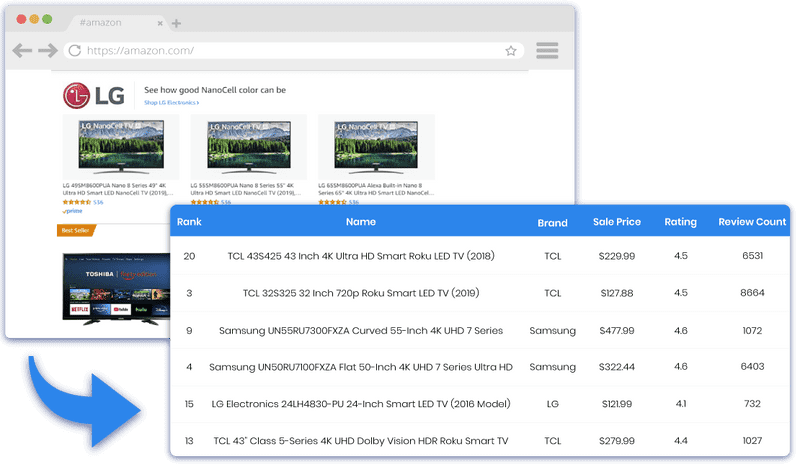

Scrape Product Data from Amazon Search Results & Categories

Extract product data from Amazon. Gather product name, ASIN, pricing, FBA, best seller rank, images, stock, full description, and 20+ other data points from Amazon search results and product pages.

We have done 90% of the work already!

It's as easy as Copy and Paste

Start

Provide search queries such as Smart Watches or any Search Results URLs to extract all product details from Amazon.

Download

Download the data in Excel, CSV, or JSON formats. Link your Dropbox to store your data.

Schedule

Schedule crawlers hourly, daily, or weekly to get updated data on your Dropbox.

One of the most sophisticated Amazon product search scrapers available

- Scrape Amazon search results pages by Product Name, Keyword, Brand, ISBN, GTIN, or any other product identifier

- Works for amazon.com, amazon.co.uk, and amazon.ca

- Built-in Brand filter to get specific product data

- Get data within minutes

Scrape Amazon based on multiple types of Input

Get Amazon product details in a spreadsheet by providing any of the following as inputs.

- Category or Search URL

- Product Name

- GTIN

- EAN

- UPC

- ISBN

- Part Number

- Keyword

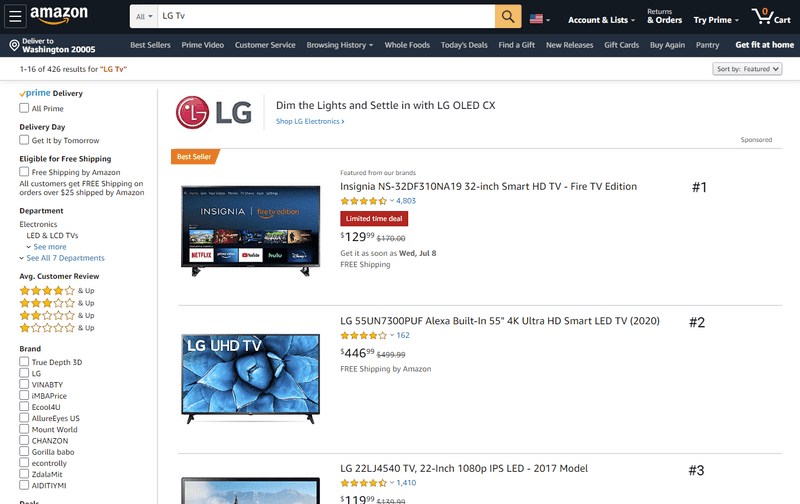

Monitor Amazon Product Search Result Ranking

Gather product ranking in the search results page for a particular keyword on Amazon within minutes.

- Get data in a few clicks

- Collect data periodically

- Deliver data to Dropbox

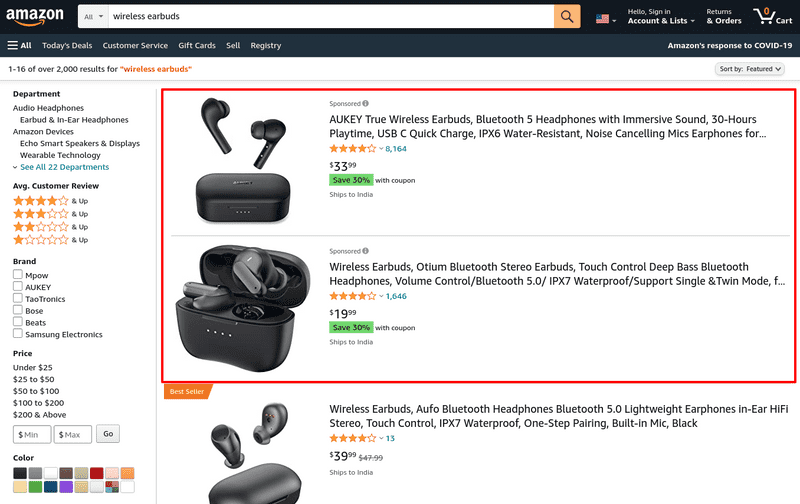

Get sponsored products for keywords on Amazon

Provide any keyword such as "wireless earbuds" and get all sponsored products along with search results.

- Find who is targeting your product keywords

- Get top advertisers in your category
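Once the sponsored results are downloaded, ranking advertisers is a simple count. The sketch below assumes each exported row has a `brand` field and an `is_sponsored` flag; both field names and the sample rows are hypothetical, so adjust them to match the columns in your actual export.

```python
from collections import Counter

def top_advertisers(rows, n=3):
    """Return the n brands with the most sponsored placements."""
    # Count only rows flagged as sponsored (hypothetical field names).
    counts = Counter(row["brand"] for row in rows if row["is_sponsored"])
    return counts.most_common(n)

# Made-up sample rows standing in for an exported search result.
rows = [
    {"brand": "BrandA", "is_sponsored": True},
    {"brand": "BrandB", "is_sponsored": False},
    {"brand": "BrandA", "is_sponsored": True},
    {"brand": "BrandC", "is_sponsored": True},
]

print(top_advertisers(rows, n=2))
```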

Pricing

Easy to use and Free to try

A few mouse clicks and copy/paste is all that it takes!

No coding required

Get data like the pros without knowing programming at all.

Support when you need it

The crawlers are easy to use, but we are here to help when you need it.

Extract data periodically

Schedule the crawlers to run hourly, daily, or weekly and get data delivered to your Dropbox.

Zero Maintenance

We will take care of all website structure changes and blocking from websites.

Frequently asked questions

Can I start with a one-month plan?

All our plans require a subscription that renews monthly. If you only need our services for a month, you can subscribe for one month and cancel your subscription within 30 days.

Why does the crawler crawl more pages than the total number of records it collected?

Some crawlers can collect multiple records from a single page, while others might need to go to 3 pages to get a single record. For example, our Amazon Bestsellers crawler collects 50 records from one page, while our Indeed crawler needs to go through a list of all jobs and then move into each job details page to get more data.
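The arithmetic behind this can be sketched as follows. The 50 records per page for the Bestsellers crawler comes from the example above; the 15 jobs per listing page and the one details page per job are assumptions made only for illustration, not measured values.

```python
import math

def pages_crawled(records, records_per_list_page, detail_pages_per_record=0):
    """Estimate how many pages a crawler visits to collect `records` records."""
    # Listing pages needed to discover all the records.
    list_pages = math.ceil(records / records_per_list_page)
    # Extra pages opened per record (for example, a details page).
    return list_pages + records * detail_pages_per_record

# Bestsellers-style crawler: 50 records from a single page.
print(pages_crawled(50, 50))

# Listing-plus-details crawler: assumed 15 jobs per listing page,
# plus one details page per job.
print(pages_crawled(30, 15, detail_pages_per_record=1))
```

In the second case the page count exceeds the record count, which is why a crawl can use more page credits than the number of records it returns.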

Can I get data periodically?

Yes. You can set up the crawler to run periodically by selecting your preferred schedule. You can schedule crawlers to run at a monthly, weekly, or hourly interval.

Can you build me a custom API or custom crawler?

Sure, we can build custom solutions for you. Please contact our Sales team to get started. In your message, describe in detail what you require.

Will my IP address get blocked while scraping? Do I need to use a VPN?

No. We don't use your IP address to scrape the website. We use our own proxies to get the data for you. All you have to do is provide the input and run the scraper.

What happens to my unused quotas at the end of each billing period?

All our crawler page quotas and API quotas reset at the end of the billing period. Unused credits do not carry over to the next billing period and are nonrefundable. This is consistent with most software subscription services.

Can I get my page quota back because I made a mistake?

Unfortunately, we cannot provide a refund or page credits if you made a mistake.

Here are some common scenarios in which we have seen quota refund requests:

- Issues with the website that you are trying to scrape

- Mistaken or accidental crawling (this also includes scenarios such as "I was unaware of page credits", "I accidentally pressed the start button")

- Providing unsupported URLs or providing the same or duplicate URLs that have already been crawled

- Duplicate data on the website

- No results on the website for the search queries/URLs

How can I get geo-based results, such as product pricing, availability, and delivery charges, for a specific location?

Most sites display product pricing, availability, and delivery charges based on the user's location. Our crawler uses locations from US states, so the pricing may vary. To get accurate results for a specific location, please contact us.

Why does the Amazon Search Result scraper return fewer records than the website shows?

Amazon shows duplicate products on its listing pages for most categories. To save your page credits, our scraper doesn't revisit such products.
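The effect of skipping duplicates can be illustrated with a minimal sketch. It assumes products are identified by ASIN and uses made-up sample listings; it is an illustration of the idea, not the scraper's actual implementation.

```python
def unique_products(listings):
    """Yield each product once, skipping ASINs that were already seen."""
    seen = set()
    for item in listings:
        asin = item["asin"]  # assumed unique product identifier
        if asin in seen:
            continue  # duplicate listing: no extra page visit, no extra record
        seen.add(asin)
        yield item

# Made-up listing page with one product shown twice.
listings = [
    {"asin": "B000000001", "name": "Smart Watch A"},
    {"asin": "B000000002", "name": "Smart Watch B"},
    {"asin": "B000000001", "name": "Smart Watch A"},
]

records = list(unique_products(listings))
print(len(listings), "listings ->", len(records), "records")
```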